1 Introduction

1.1 Learning objectives


1.2 Tools

1.2.1 Excalidraw

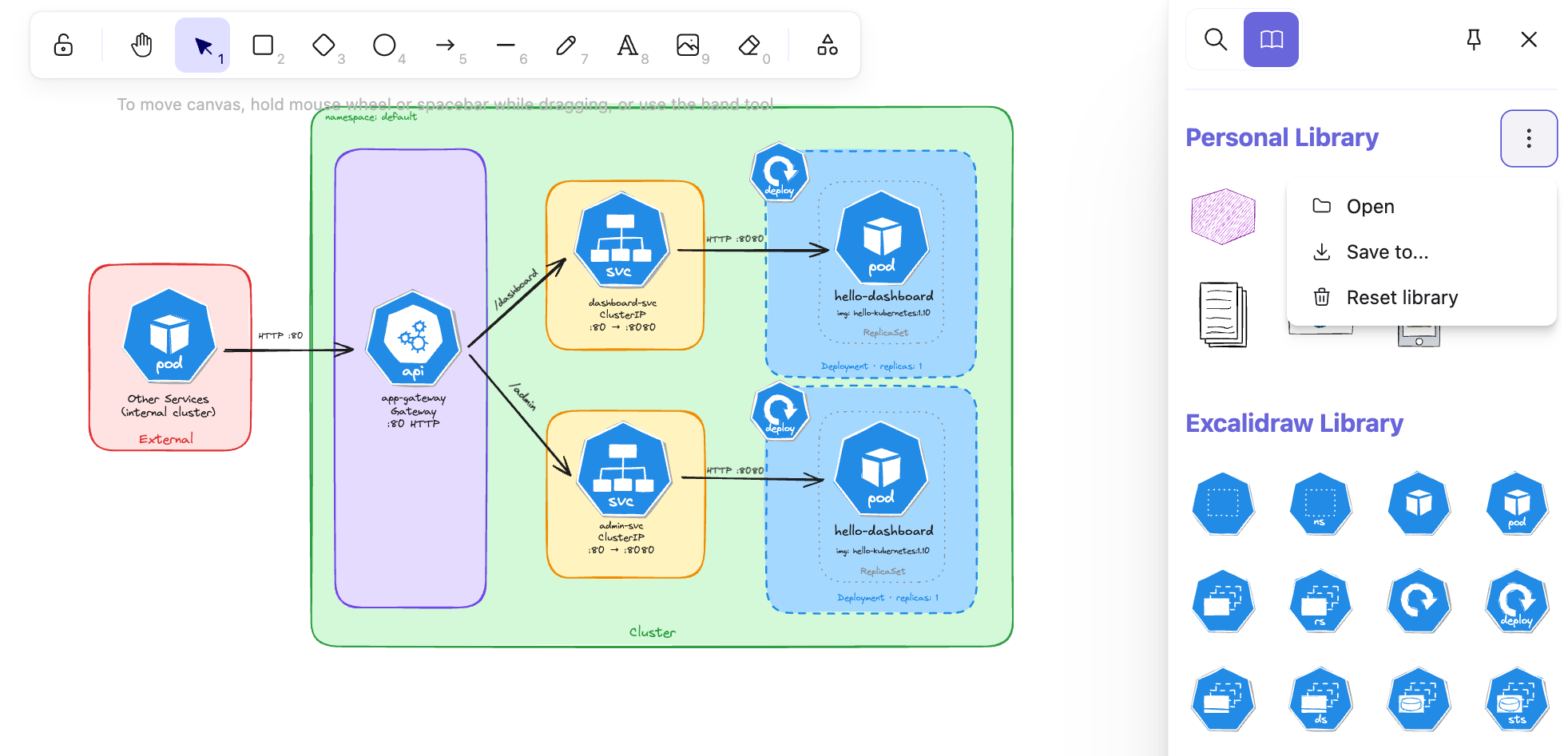

Throughout this course, we will design and visualize many Kubernetes architectures before implementing them, and Excalidraw is the tool we chose for the job. It is an open-source virtual whiteboard that produces clean, hand-drawn-style sketches and runs entirely in the browser with no installation required.

However, if you prefer to work inside your editor, Excalidraw is also available as an extension for the most popular IDEs:

- VS Code: Excalidraw Editor on the Visual Studio Marketplace.

- JetBrains IDEs (IntelliJ, WebStorm, GoLand, CLion, etc.): Excalidraw Integration on the JetBrains Marketplace.

Each chapter includes the source .excalidraw file alongside the exported PNG.

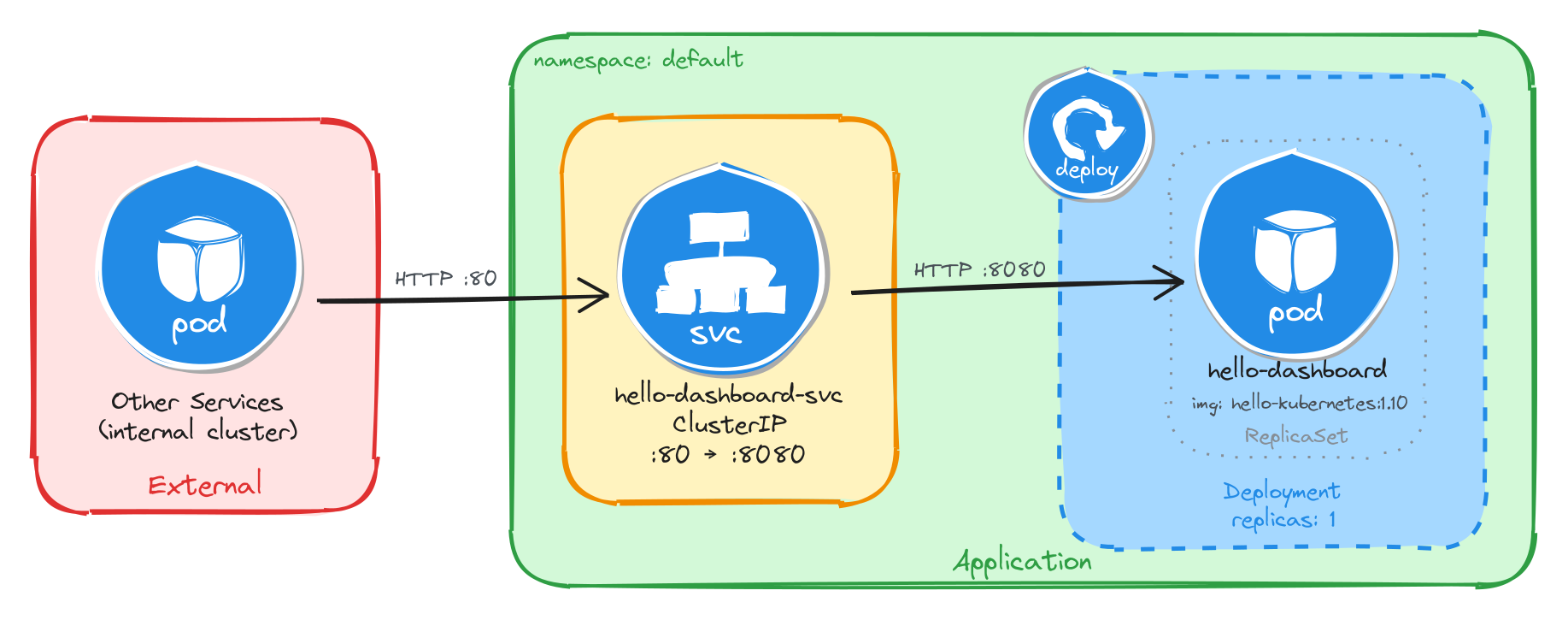

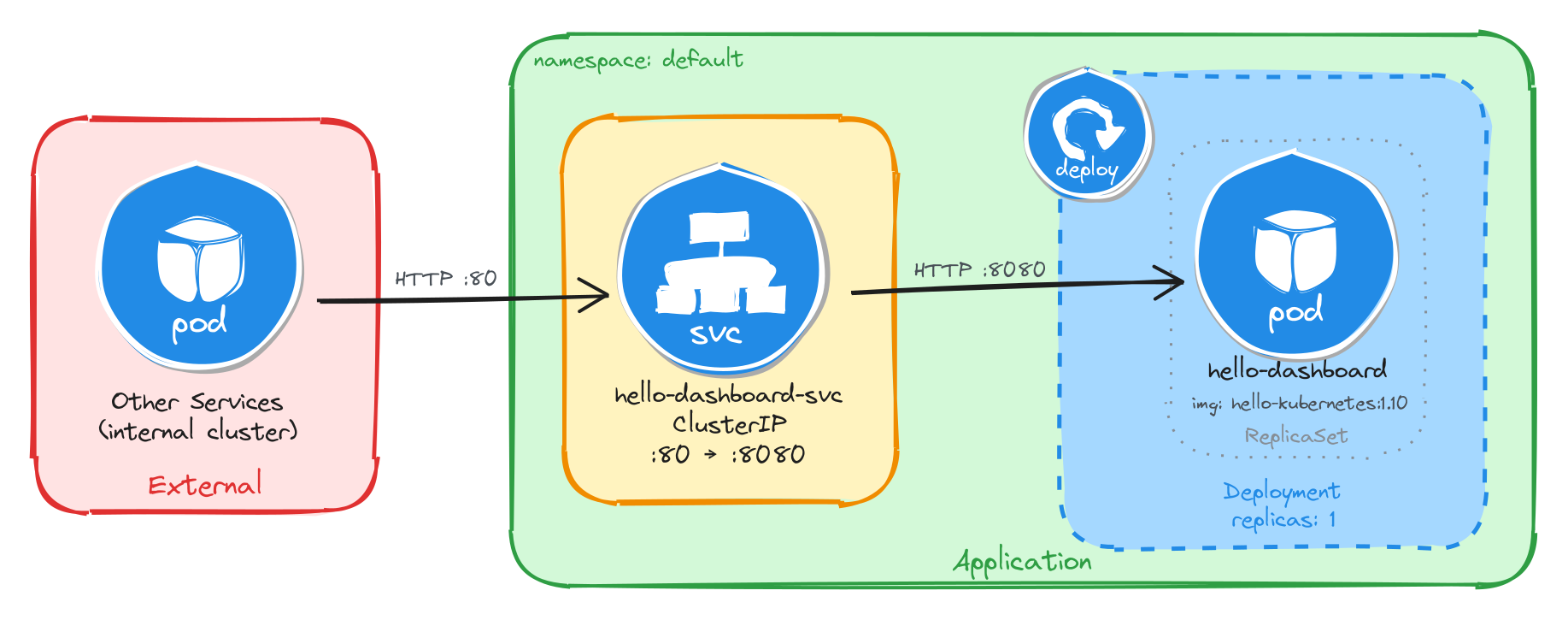

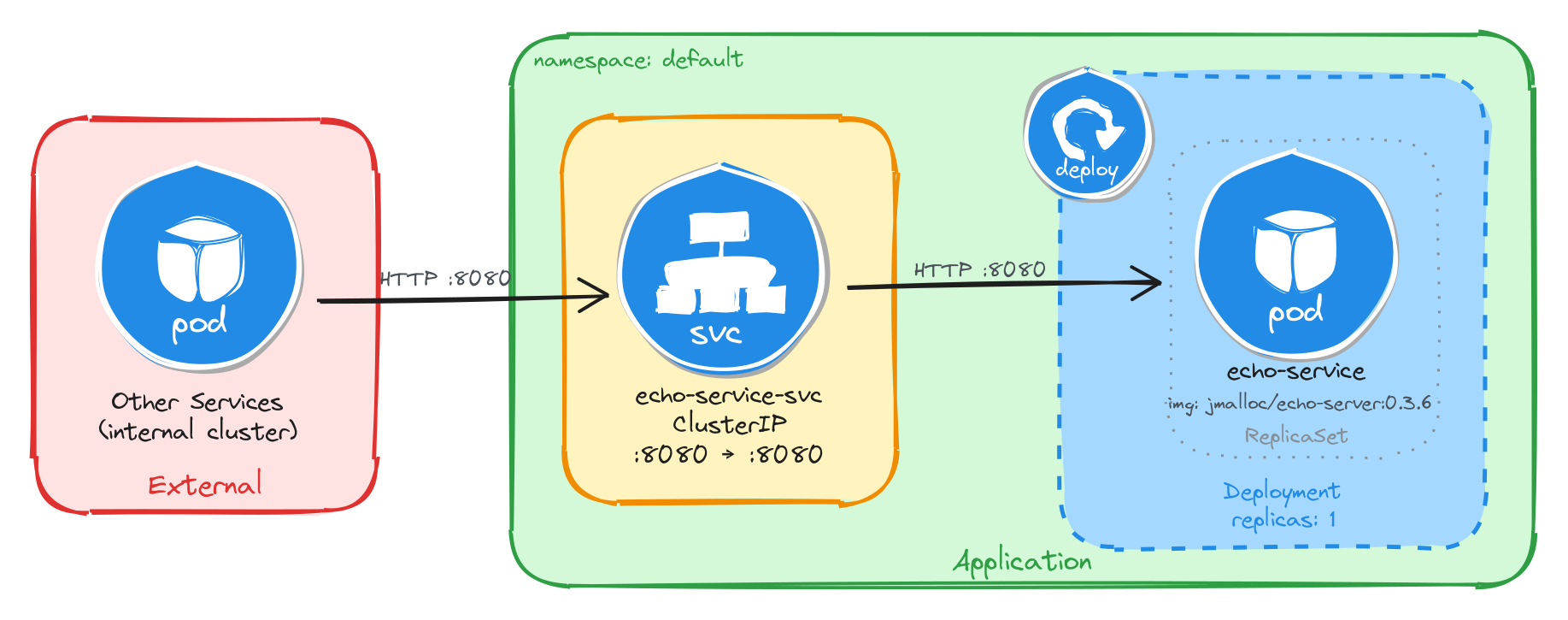

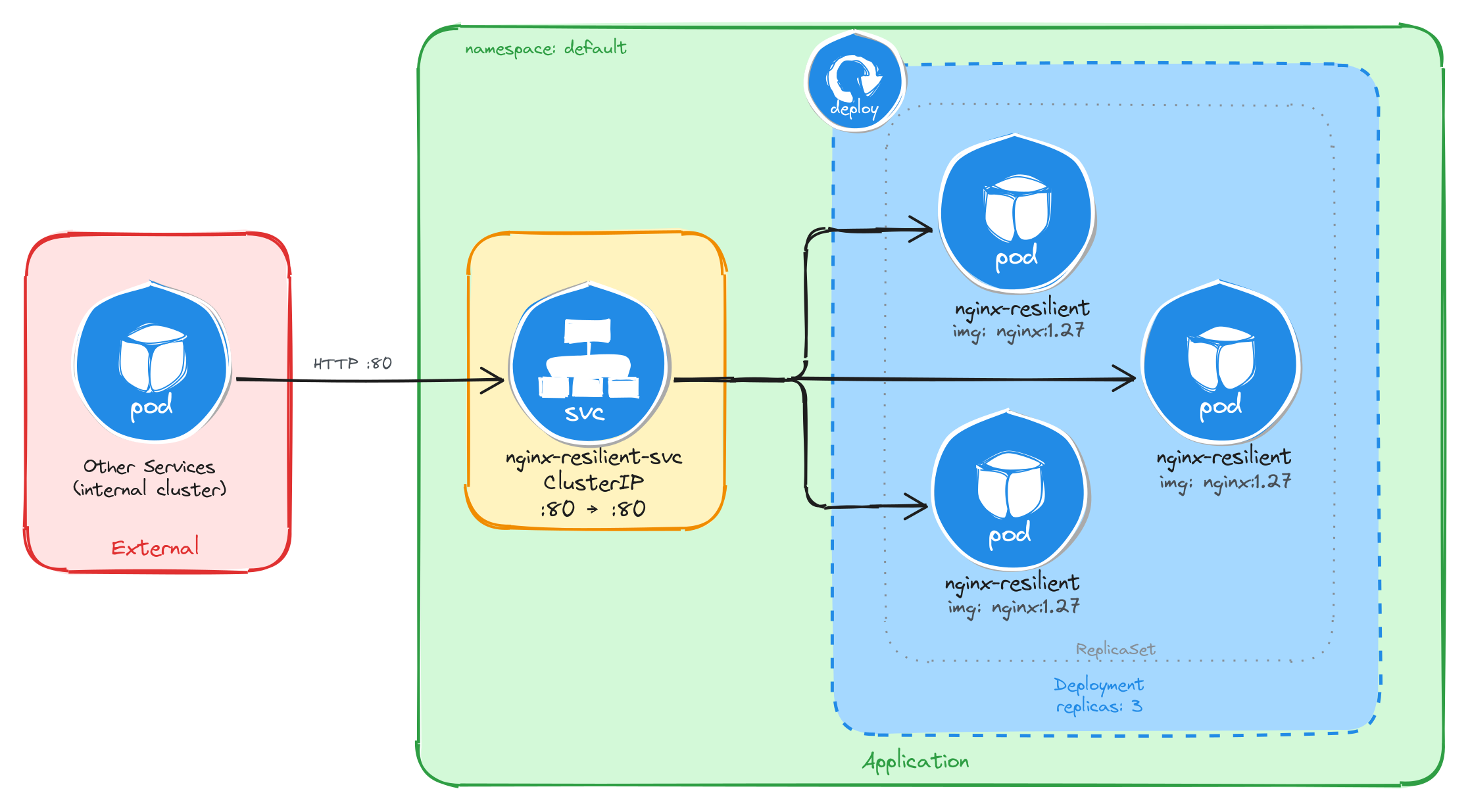

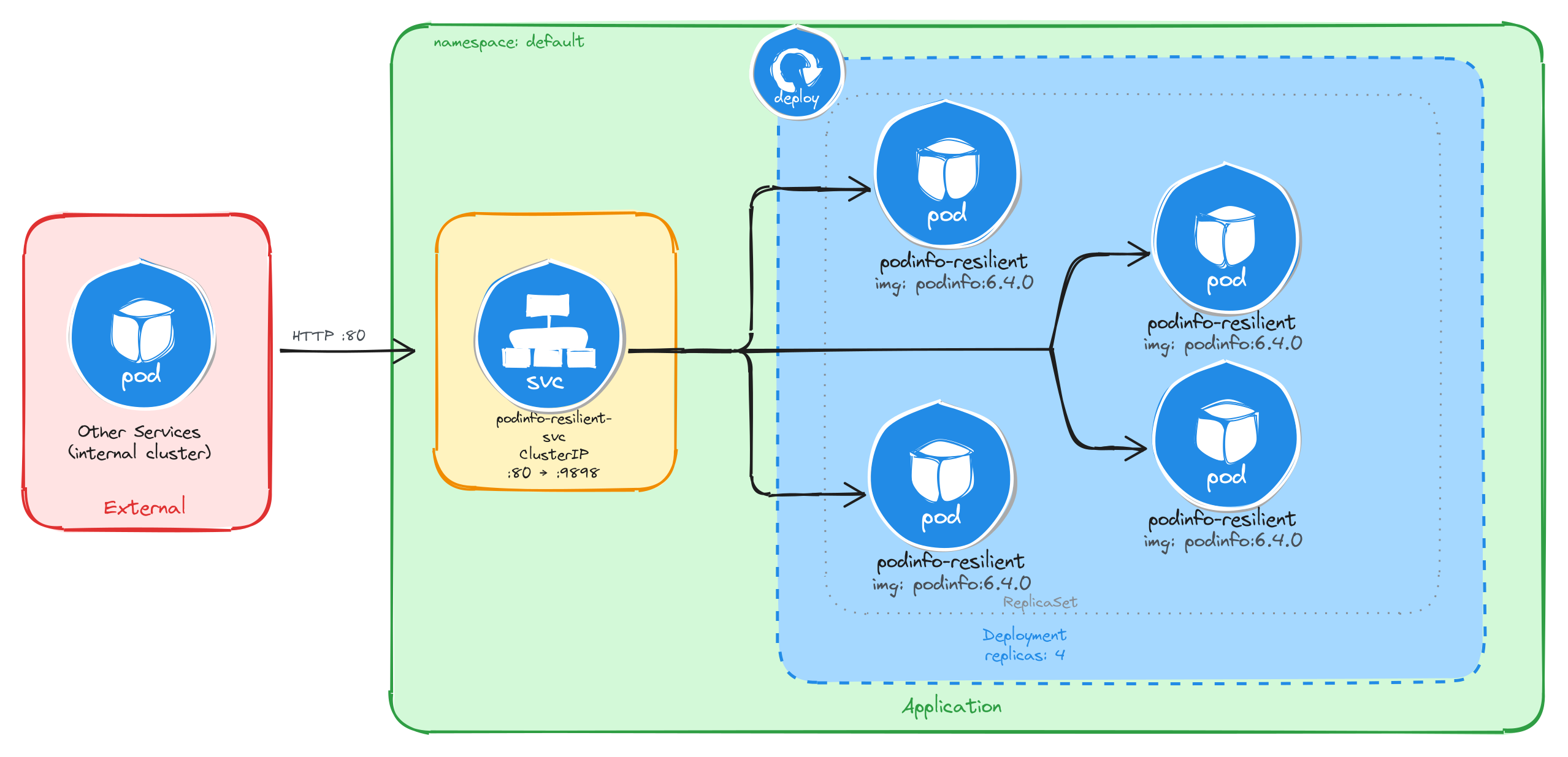

As an example, here is the architecture diagram for a Deployment exposed through a ClusterIP Service, reachable only from inside the cluster:

1.2.1.1 How to install Kubernetes icons in Excalidraw

In your local editor, open any .excalidraw file, then click Open in the right panel and select the .excalidrawlib file you want to import. The library will be added to your asset list, and you can start using the icons in your diagrams right away.

We used the Kubernetes Icons library for our diagrams, but feel free to explore other libraries or create your own!

The image below shows the import process in VS Code, but the steps are identical in the browser.

1.2.2 Killercoda

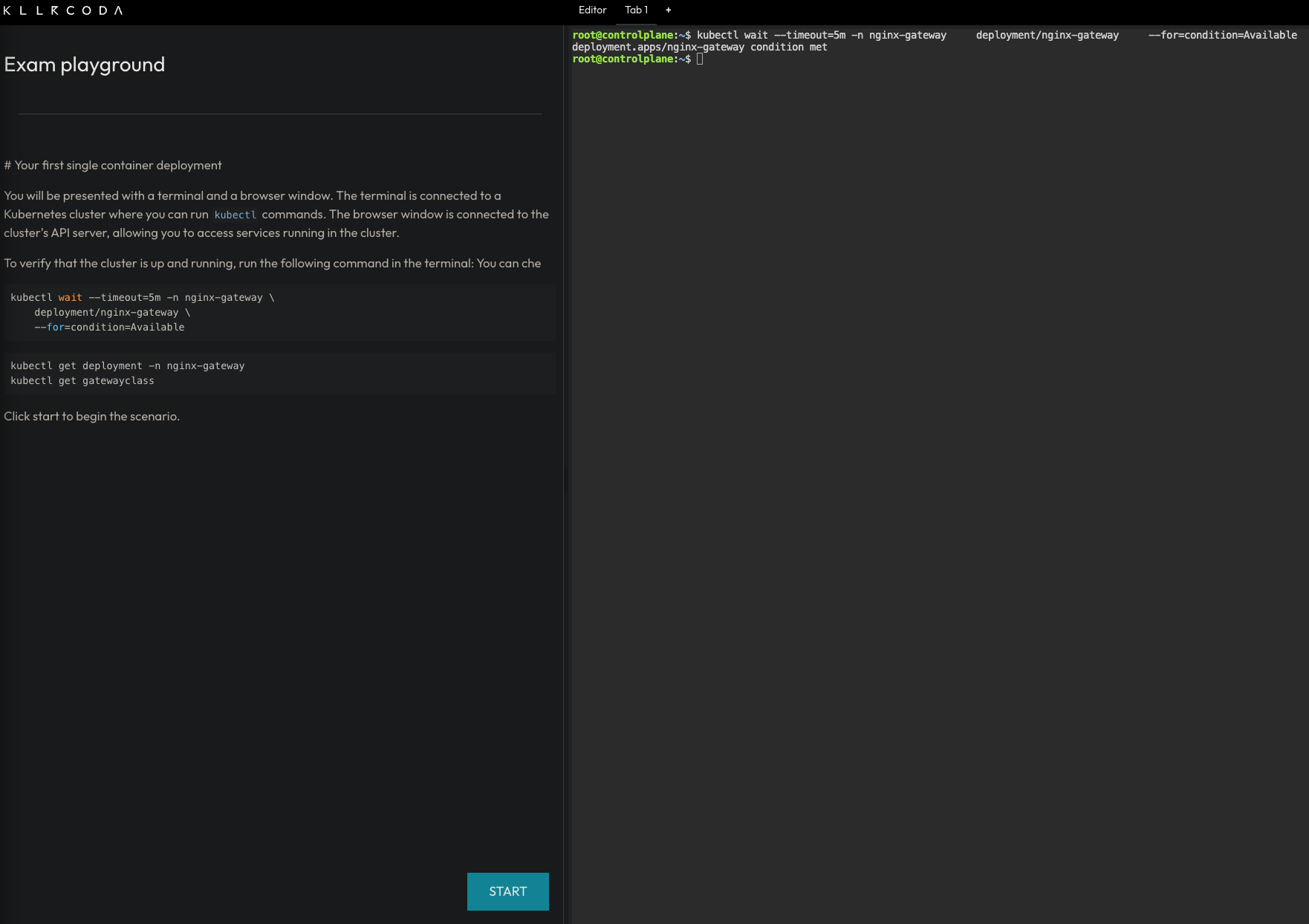

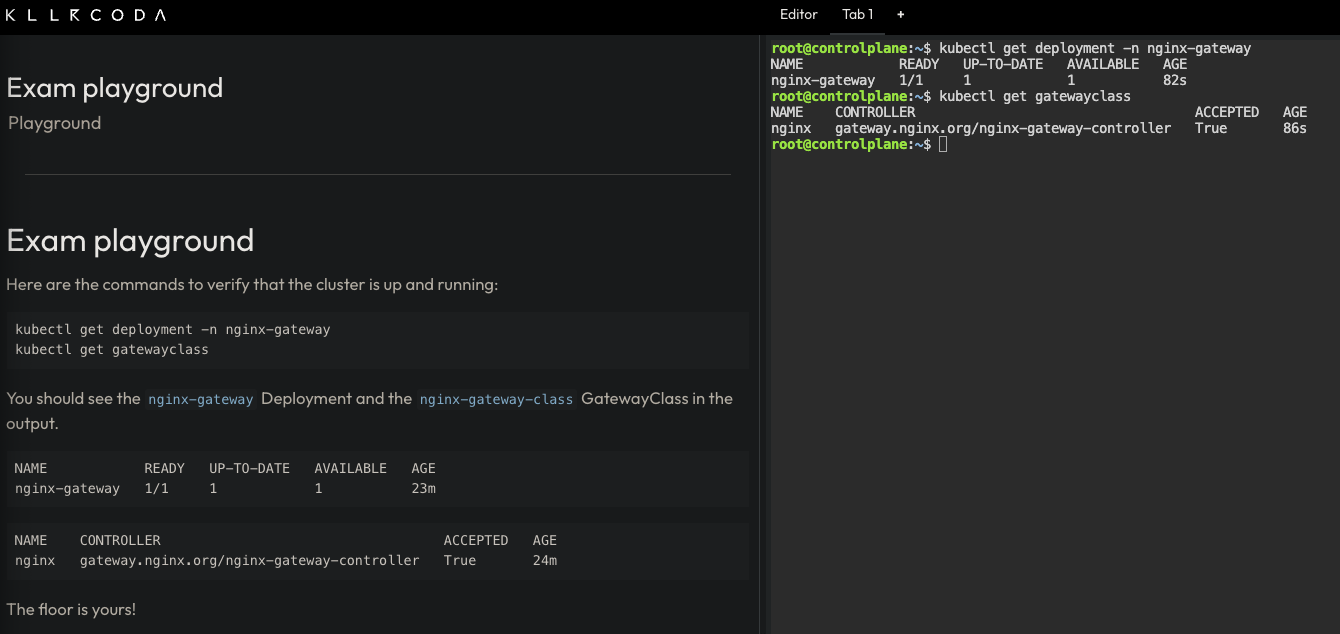

The best way to learn the tools used in this course is to use them hands-on in a safe, interactive environment with no local setup required. This is why we chose Killercoda as our playground.

Killercoda is a platform for learning and practicing skills hands-on, with real-world tools, in a safe and interactive environment.

Killercoda offers a wide range of scenarios for various topics and skill levels. For this course specifically, we created a custom playground that includes all the tools and resources needed to complete the tasks. You can access it at https://killercoda.com/isislab/scenario/exam-playground.

1.2.2.1 How to use the Killercoda playground

Navigate to https://killercoda.com/isislab/scenario/exam-playground and start the scenario. This will provision a Kubernetes cluster and deploy all the resources needed for the tasks.

Once the setup completes, you will have a personal playground instance with a running Kubernetes cluster and a terminal with all the necessary tools pre-installed. Use this terminal to run kubectl commands and interact with the cluster as you work through the tasks.

1.2.3 Busybox

Busybox is a minimal Linux image that bundles many common Unix utilities into a single small executable. It is widely used in container environments where image size matters and a full OS is not needed.

In this course, we use Busybox as a lightweight Pod to run quick diagnostic commands inside the cluster without deploying a full application container, for example to check network connectivity, resolve DNS names, or inspect environment variables.

To get a feel for it, you can run a Busybox container locally with Docker and explore the tools it provides:

docker run -it --rm busybox sh

This starts an interactive shell inside a Busybox container. From there, you can run commands like wget, ping, or env. These are the same utilities you will use later inside Kubernetes Pods.
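For one-off checks you do not need an interactive shell. The sketch below wraps the same docker run invocation in a small helper; the function name bb is an assumption made for illustration and is not part of the course material.

```shell
# Hypothetical helper: run a single Busybox command in a throwaway container.
# Everything after the function name is passed to the container unchanged.
bb() {
  docker run --rm busybox "$@"
}
```

For example, `bb env` prints the container's environment variables and exits; the container is removed afterwards because of --rm.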

1.3 How to contribute via GitHub

We welcome all kinds of contributions: bug fixes, content improvements, and suggestions for new exercises or topics. The project is fully hosted on GitHub. See CONTRIBUTING.md for setup instructions and the contribution workflow.

1.3.1 Adding a new topic

Create a Markdown file in the src directory and add an entry for it in SUMMARY.md.
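As a minimal sketch of these two steps, the helper below creates the topic file and appends a link entry to SUMMARY.md. It assumes SUMMARY.md uses the usual mdBook list-of-links syntax; the function name, file name, and title are hypothetical, so check the existing entries in SUMMARY.md before relying on it.

```shell
# Hypothetical helper: create a topic file and register it in SUMMARY.md.
# $1 = src directory, $2 = file name without extension, $3 = topic title
add_topic() {
  src_dir="$1"; slug="$2"; title="$3"
  # Start the new topic file with a top-level heading.
  printf '# %s\n' "$title" > "$src_dir/$slug.md"
  # Append a link entry (assumed format: a Markdown list item linking to the file).
  printf '%s\n' "- [$title]($slug.md)" >> "$src_dir/SUMMARY.md"
}
```

Calling `add_topic src network-policies "Network policies"` would create src/network-policies.md and add a matching line at the end of src/SUMMARY.md.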

Chapter, section, and subsection numbering is handled automatically by the preprocessor in book.toml. For example, a # heading is numbered 1., a ## heading 1.1., and a ### heading 1.1.1.
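To make the mapping concrete, here is an illustrative awk sketch that numbers headings by depth in the same way. It is not the book's actual preprocessor, and the function name number_headings is an assumption; it also does not skip # lines inside code blocks.

```shell
# Illustrative only: prefix each Markdown heading with a depth-based number.
number_headings() {
  awk '
    /^#+ / {
      depth = length($1)          # number of leading # characters
      counters[depth]++           # advance the counter at this depth
      for (d = depth + 1; d <= 6; d++) counters[d] = 0   # reset deeper levels
      prefix = ""
      for (d = 1; d <= depth; d++) {
        n = counters[d] + 0
        prefix = prefix n "."
      }
      sub(/^#+ /, "")             # drop the Markdown marker
      print prefix " " $0
      next
    }
    { print }                     # pass every other line through unchanged
  '
}
```

Piping a file through `number_headings` turns the sequence #, ##, ###, ## into 1., 1.1., 1.1.1., 1.2.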

1.3.2 Adding a task to an existing topic

Add a new section at the appropriate heading level and follow the format of the existing tasks in that file.

1.3.3 Adding diagrams

Draw your diagram in Excalidraw and place the source .excalidraw file in src/diagrams. The build process will export it as a PNG to src/diagrams_images, which you can then reference in your Markdown file.

2 Structure of tasks

Every task in this book follows the same three-part structure: a scenario that sets the context, an architectural design that justifies the solution, and an implementation that walks through the commands.

2.1 Scenario

Each task opens with a short scenario describing what the team needs. The scenario establishes the functional requirement (what the application does), the container image to use, the resilience expectations (whether brief downtime is acceptable or not), and the accessibility constraints (internal-only, externally reachable, etc.). These constraints are what drive the architectural decisions that follow.

2.2 Architectural design

The architectural design section translates the scenario constraints into concrete design decisions. Each decision is linked to a specific constraint and to the Kubernetes resource that satisfies it. For example, if the task allows brief downtime, this section explains why a single-replica Deployment is sufficient. If the application must be reachable only from inside the cluster, it explains why a ClusterIP Service is the right choice and why no Ingress or Gateway is needed.

This section also includes an architecture diagram that shows the resulting resource topology: how external and internal clients interact (or do not interact) with the application, and how traffic flows from the Service into the Pod managed by the Deployment.

2.3 Implementation

The implementation section provides the step-by-step commands to deploy the solution. It is organized into three parts:

- Resource creation: The main kubectl commands to create the Kubernetes resources required to implement the architectural design. Each command is explained: why a particular flag or value was chosen, and how it connects back to the architectural design. Where useful, a --dry-run=client -o yaml variant of the command is included so the reader can inspect the generated YAML before applying it.

- Verify resource creation: A list of commands to confirm that the resources were created correctly. This typically includes checking things like whether a Pod is running or a Service has the expected type, ports, and no unintended external IP.

- Test the application: A practical test that validates end-to-end connectivity. This usually involves creating a temporary Pod (such as busybox) inside the cluster and using wget to send a request to the Service. The expected response is shown so you can confirm that the application is working as intended.

3 Single-container deployment

Design and deploy a simple single-container application with a service for internal access.

This category includes the following learning objectives:

- Understanding of Pods.

- Understanding of Deployments.

- Understanding of ClusterIP services.

3.1 Task 1: Design and deploy an internal dashboard

Your team needs an internal monitoring dashboard that runs inside the cluster and shows, at any time, the node and namespace they are working in.

The dashboard must be packaged as a single container image (hello-kubernetes dashboard). It does not need to be highly resilient, since brief periods of unavailability are acceptable.

However, other services inside the cluster need a stable address to reach it, so Pod IPs alone are not enough. Make sure the dashboard is strictly for internal use and not accessible from outside the cluster.

3.1.1 Architectural design

The task requires a single container image, brief downtime is acceptable, and the dashboard must be reachable only from inside the cluster. These constraints drive three design decisions:

- Because the application is a single container, a Deployment with one replica is enough. The Deployment creates a ReplicaSet that manages the Pod. If the Pod crashes, the ReplicaSet recreates it automatically at the cost of a short period of unavailability, which the task explicitly allows.

- Other services need a stable address to reach the dashboard. Pod IPs change every time a Pod is recreated, so we place a ClusterIP Service (hello-dashboard-svc) in front of the Pod. The Service provides a fixed cluster-internal DNS name and load-balances traffic to the Pod. It accepts requests on port 80 and forwards them to the container’s port 8080.

- The dashboard must not be accessible from outside the cluster. A ClusterIP Service has no external port and no route from outside the cluster network, so it satisfies this requirement by design. No Gateway, Ingress, or NodePort is needed.

The diagram shows the resulting architecture: external clients have no path into the application, while internal services reach the dashboard through the ClusterIP Service, which forwards traffic into the Pod managed by the Deployment.

3.1.2 Implementation

We start by creating a Deployment with a single replica (the default). The task allows short periods of unavailability, so one instance is enough. We use the paulbouwer/hello-kubernetes:1.10 image and declare that the container listens on port 8080. The kubectl create deployment command automatically adds the label app=hello-dashboard to the Pods, which will be useful later when we create the Service.

kubectl create deployment hello-dashboard \
  --image=paulbouwer/hello-kubernetes:1.10 \
  --port=8080

To inspect the YAML that would be applied without actually creating the resource, use the --dry-run=client -o yaml flags:

kubectl create deployment hello-dashboard \
  --image=paulbouwer/hello-kubernetes:1.10 \
  --port=8080 \
  --dry-run=client -o yaml

The output should look similar to this:

apiVersion: apps/v1
kind: Deployment
metadata:
  creationTimestamp: null
  labels:
    app: hello-dashboard
  name: hello-dashboard
spec:
  replicas: 1
  selector:
    matchLabels:
      app: hello-dashboard
  strategy: {}
  template:
    metadata:
      creationTimestamp: null
      labels:
        app: hello-dashboard
    spec:
      containers:
      - image: paulbouwer/hello-kubernetes:1.10
        name: hello-kubernetes
        ports:
        - containerPort: 8080
        resources: {}
status: {}
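If you prefer to keep the generated manifest in a file, for example to review it or put it under version control, the dry-run output can be redirected and then applied. The helper below is a sketch of that workflow; the function name and the output file name are assumptions made for illustration.

```shell
# Hypothetical helper: generate a Deployment manifest with a client-side dry run,
# save it to a file, and then create the resource from that file.
# $1 = Deployment name, $2 = container image, $3 = container port
generate_and_apply() {
  name="$1"; image="$2"; port="$3"
  manifest="${name}-deployment.yaml"
  # The dry run only prints YAML; nothing is sent to the cluster yet.
  kubectl create deployment "$name" \
    --image="$image" \
    --port="$port" \
    --dry-run=client -o yaml > "$manifest"
  # Apply the saved manifest to actually create the Deployment.
  kubectl apply -f "$manifest"
}
```

For this task the call would be `generate_and_apply hello-dashboard paulbouwer/hello-kubernetes:1.10 8080`, used instead of the imperative create command rather than in addition to it.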

Next, we expose the Deployment as a ClusterIP Service. ClusterIP is the right choice here because it gives other services inside the cluster a stable address for reaching the dashboard while keeping it inaccessible from outside.

We use kubectl expose instead of creating the Service manually with kubectl create service clusterip because it automatically sets the selector to match the Deployment Pods, which is exactly the wiring we need. The Service listens on port 80 and forwards traffic to the container port 8080.

kubectl expose deployment hello-dashboard \
  --name=hello-dashboard-svc \
  --type=ClusterIP \
  --port=80 \
  --target-port=8080
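For readers who want to see the wiring that kubectl expose sets up, the sketch below writes a roughly equivalent Service manifest to a file. The file name is an assumption, and the manifest is hand-written for illustration rather than captured from a cluster, so minor fields may differ from what kubectl generates.

```shell
# Write a Service manifest that mirrors the kubectl expose command above.
cat > hello-dashboard-svc.yaml <<'EOF'
apiVersion: v1
kind: Service
metadata:
  name: hello-dashboard-svc
spec:
  type: ClusterIP
  # Must match the Pod label set by kubectl create deployment.
  selector:
    app: hello-dashboard
  ports:
    - protocol: TCP
      port: 80          # port the Service listens on
      targetPort: 8080  # port the container listens on
EOF
# To create the Service from this file instead of using kubectl expose:
#   kubectl apply -f hello-dashboard-svc.yaml
```

The selector is the part that kubectl create service clusterip would not set to the Deployment's Pod label for you, which is why the text prefers kubectl expose.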

3.1.2.1 Verify resource creation

To verify that the Pod is running, execute the following command, which filters Pods by the app=hello-dashboard label automatically set by kubectl create deployment:

kubectl get pods -l app=hello-dashboard

The output should look similar to this:

NAME                               READY   STATUS    RESTARTS   AGE
hello-dashboard-6bfbf8b67c-jv8tv   1/1     Running   0          16m

To verify that the Service is configured correctly, run:

kubectl get svc hello-dashboard-svc

The output should look similar to this:

NAME                  TYPE        CLUSTER-IP     EXTERNAL-IP   PORT(S)   AGE
hello-dashboard-svc   ClusterIP   10.111.28.77   <none>        80/TCP    15m

From this output, we can confirm that internal access to the dashboard is available at http://hello-dashboard-svc:80 and that external access is not possible, since no external IP is assigned.
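Note that the short name hello-dashboard-svc resolves only for clients in the same namespace as the Service. Clients in other namespaces need the namespaced DNS name. The helper below sketches how that name is built; it assumes the Service lives in the default namespace (the commands above do not pass one) and that the cluster uses the default cluster.local domain. The function name is hypothetical.

```shell
# Hypothetical helper: build the fully qualified DNS name of a Service.
# $1 = Service name, $2 = namespace (default: default), $3 = cluster domain
service_fqdn() {
  printf '%s.%s.svc.%s\n' "$1" "${2:-default}" "${3:-cluster.local}"
}
```

`service_fqdn hello-dashboard-svc` prints hello-dashboard-svc.default.svc.cluster.local, which can be used in place of the short name, for example `wget -qO- "http://$(service_fqdn hello-dashboard-svc)"`.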

3.1.2.2 Test the dashboard

To test the dashboard, create a temporary Pod using busybox:

kubectl run -it --rm --restart=Never busybox --image=busybox sh

Inside the busybox Pod, use wget to reach the dashboard through the Service, addressing it by its DNS name. The dashboard should respond with an HTML page containing cluster information.

wget -qO- http://hello-dashboard-svc

The dashboard HTML should look similar to the example below:

<!DOCTYPE html>
<html>
<head>
  <title>Hello Kubernetes!</title>
  <!-- CSS styles omitted for brevity -->
</head>
<body>
  <div class="main">
    <!-- Content omitted for brevity -->
    <div class="content">
      <div id="message">Hello world!</div>
      <div id="info">
        <table>
          <tr><th>namespace:</th><td>-</td></tr>
          <tr><th>pod:</th><td>hello-dashboard-6bfbf8b67c-jv8tv</td></tr>
          <tr><th>node:</th><td>- (Linux 6.8.0-94-generic)</td></tr>
        </table>
      </div>
    </div>
  </div>
</body>
</html>

3.2 Task 2: Design and deploy an internal request inspector

Your team needs an internal debugging tool that runs inside the cluster and displays HTTP request details such as headers, source IP, and hostname. This helps developers verify how traffic flows through the cluster.

The tool must be packaged as a single container image (traefik/whoami). It does not need to be highly resilient, since brief periods of unavailability are acceptable.

However, other services inside the cluster need a stable address to reach it, so Pod IPs alone are not enough. Make sure the tool is strictly for internal use and not accessible from outside the cluster.

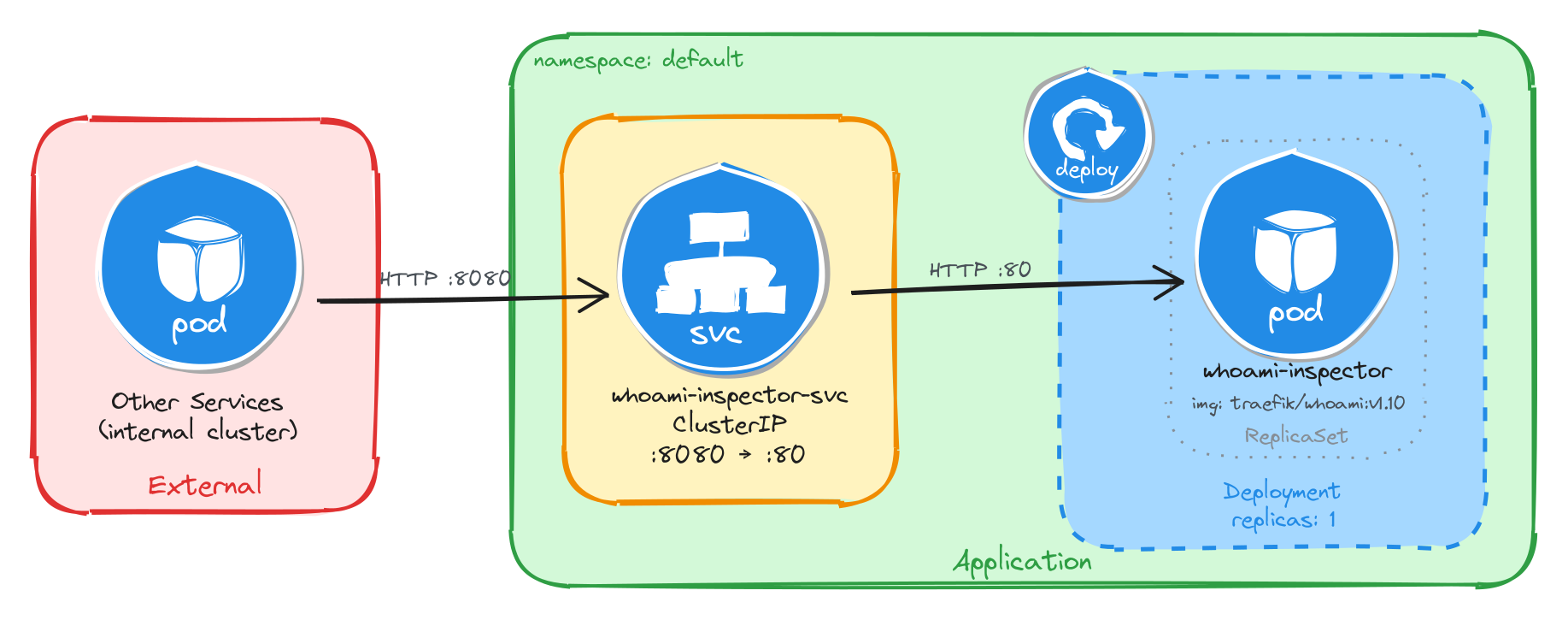

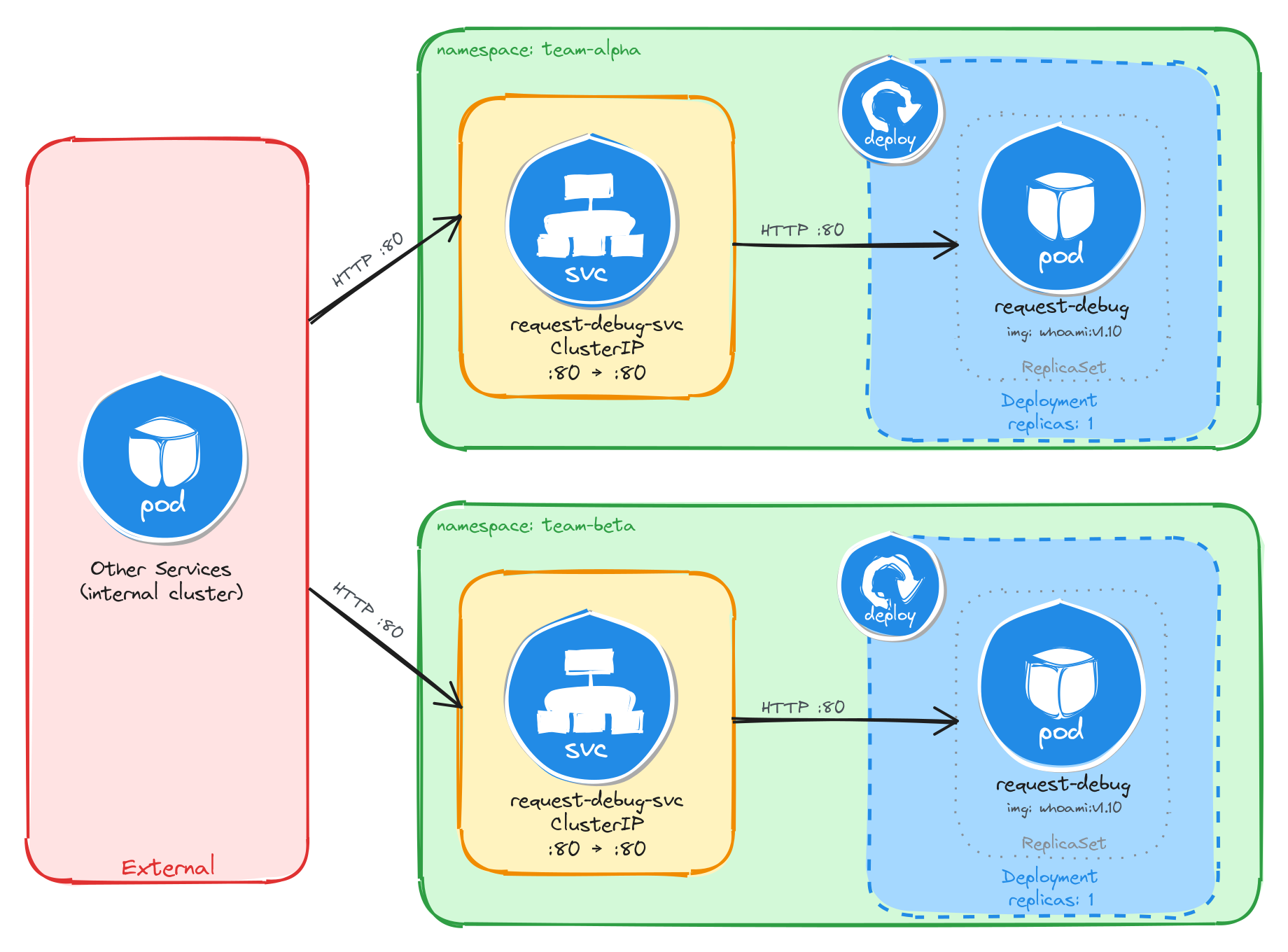

3.2.1 Architectural design

The task requires a single container image, brief downtime is acceptable, and the request inspector must be reachable only from inside the cluster. These constraints drive three design decisions:

- Because the application is a single container, a Deployment with one replica is enough. The Deployment creates a ReplicaSet that manages the Pod. If the Pod crashes, the ReplicaSet recreates it automatically at the cost of a short period of unavailability, which the task explicitly allows.

- Other services need a stable address to reach the request inspector. Pod IPs change every time a Pod is recreated, so we place a ClusterIP Service (whoami-inspector-svc) in front of the Pod. The Service provides a fixed cluster-internal DNS name and load-balances traffic to the Pod. It accepts requests on port 8080 and forwards them to the container’s port 80.

- The request inspector must not be accessible from outside the cluster. A ClusterIP Service has no external port and no route from outside the cluster network, so it satisfies this requirement by design. No Gateway, Ingress, or NodePort is needed.

The diagram shows the resulting architecture: external clients have no path into the application, while internal services reach the request inspector through the ClusterIP Service, which forwards traffic into the Pod managed by the Deployment.

3.2.2 Implementation

We start by creating a Deployment with a single replica (the default). The task allows short periods of unavailability, so one instance is enough. We use the traefik/whoami:v1.10 image and declare that the container listens on port 80. The kubectl create deployment command automatically adds the label app=whoami-inspector to the Pods, which will be useful later when we create the Service.

kubectl create deployment whoami-inspector \
  --image=traefik/whoami:v1.10 \
  --port=80

To inspect the YAML that would be applied without actually creating the resource, use the --dry-run=client -o yaml flags:

kubectl create deployment whoami-inspector \
  --image=traefik/whoami:v1.10 \
  --port=80 \
  --dry-run=client -o yaml

The output should look similar to this:

apiVersion: apps/v1
kind: Deployment
metadata:
  creationTimestamp: null
  labels:
    app: whoami-inspector
  name: whoami-inspector
spec:
  replicas: 1
  selector:
    matchLabels:
      app: whoami-inspector
  strategy: {}
  template:
    metadata:
      creationTimestamp: null
      labels:
        app: whoami-inspector
    spec:
      containers:
      - image: traefik/whoami:v1.10
        name: whoami
        ports:
        - containerPort: 80
        resources: {}
status: {}

Next, we expose the Deployment as a ClusterIP Service. ClusterIP is the right choice here because it gives other services inside the cluster a stable address for reaching the request inspector while keeping it inaccessible from outside.

We use kubectl expose instead of creating the Service manually with kubectl create service clusterip because it automatically sets the selector to match the Deployment Pods, which is exactly the wiring we need. The Service listens on port 8080 and forwards traffic to the container port 80.

kubectl expose deployment whoami-inspector \
  --name=whoami-inspector-svc \
  --type=ClusterIP \
  --port=8080 \
  --target-port=80

3.2.2.1 Verify resource creation

To verify that the Pod is running, execute the following command, which filters Pods by the app=whoami-inspector label automatically set by kubectl create deployment:

kubectl get pods -l app=whoami-inspector

The output should look similar to this:

NAME                                READY   STATUS    RESTARTS   AGE
whoami-inspector-5f4b8d7c9a-k2m7p   1/1     Running   0          12m
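To observe the self-healing behaviour described in the architectural design, you can delete the running Pod and watch the ReplicaSet replace it. The helper below is a sketch of that experiment; the function name and the 120-second timeout are assumptions chosen for illustration.

```shell
# Hypothetical helper: delete the Pods behind a Deployment and wait for the replacement.
# $1 = Deployment name, which kubectl create deployment also uses as the app label value
demo_self_healing() {
  app="$1"
  # Delete the current Pod(s); the ReplicaSet notices the missing replica.
  kubectl delete pod -l "app=$app"
  # Block until the Deployment reports its replicas available again.
  kubectl rollout status "deployment/$app" --timeout=120s
  # List the replacement Pod, including its IP address.
  kubectl get pods -l "app=$app" -o wide
}
```

After `demo_self_healing whoami-inspector`, the listed Pod should have a different name suffix, and usually a different IP, than the one shown before. This is the reason the design puts a Service in front of the Pod.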

To verify that the Service is configured correctly, run:

kubectl get svc whoami-inspector-svc

The output should look similar to this:

NAME                   TYPE        CLUSTER-IP      EXTERNAL-IP   PORT(S)    AGE
whoami-inspector-svc   ClusterIP   10.96.145.203   <none>        8080/TCP   10m

From this output, we can confirm that internal access to the request inspector is available at http://whoami-inspector-svc:8080 and that external access is not possible, since no external IP is assigned.
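The default table shows the Service port but not the target port. As a sketch, the helper below uses a jsonpath template to print the Service type together with the port mapping of the first port entry; the function name is an assumption.

```shell
# Hypothetical helper: print "<type> <port>-><targetPort>" for a Service's first port.
# $1 = Service name
service_port_mapping() {
  kubectl get svc "$1" \
    -o jsonpath='{.spec.type} {.spec.ports[0].port}->{.spec.ports[0].targetPort}{"\n"}'
}
```

For this task, `service_port_mapping whoami-inspector-svc` should print something like ClusterIP 8080->80, matching the values passed to kubectl expose.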

3.2.2.2 Test the request inspector

To test the request inspector, create a temporary Pod using busybox:

kubectl run -it --rm --restart=Never busybox --image=busybox sh

Inside the busybox Pod, use wget to reach the request inspector through the Service, addressing it by its DNS name. The tool should respond with plain text showing HTTP request details.

wget -qO- http://whoami-inspector-svc:8080

The response should look similar to the example below:

Hostname: whoami-inspector-5f4b8d7c9a-k2m7p
IP: 127.0.0.1
IP: 10.244.0.12
RemoteAddr: 10.244.0.1:48372
GET / HTTP/1.1
Host: whoami-inspector-svc:8080
User-Agent: Wget

3.3 Task 3: Design and deploy an internal health endpoint

Your team needs an internal health endpoint that runs inside the cluster and returns pod metadata in JSON format. This helps the platform team verify cluster connectivity and inspect runtime information about running workloads.

The endpoint must be packaged as a single container image (podinfo). It does not need to be highly resilient, since brief periods of unavailability are acceptable.

However, other services inside the cluster need a stable address to reach it, so Pod IPs alone are not enough. Make sure the endpoint is strictly for internal use and not accessible from outside the cluster.

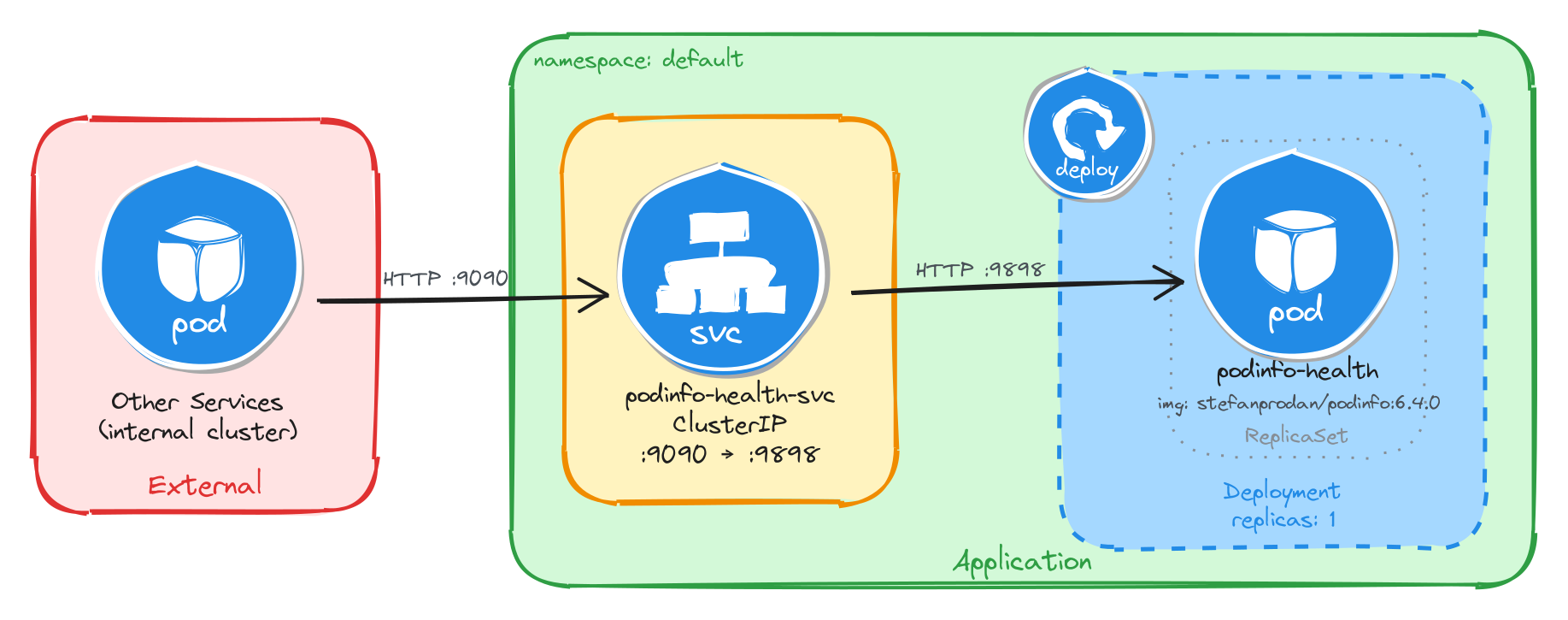

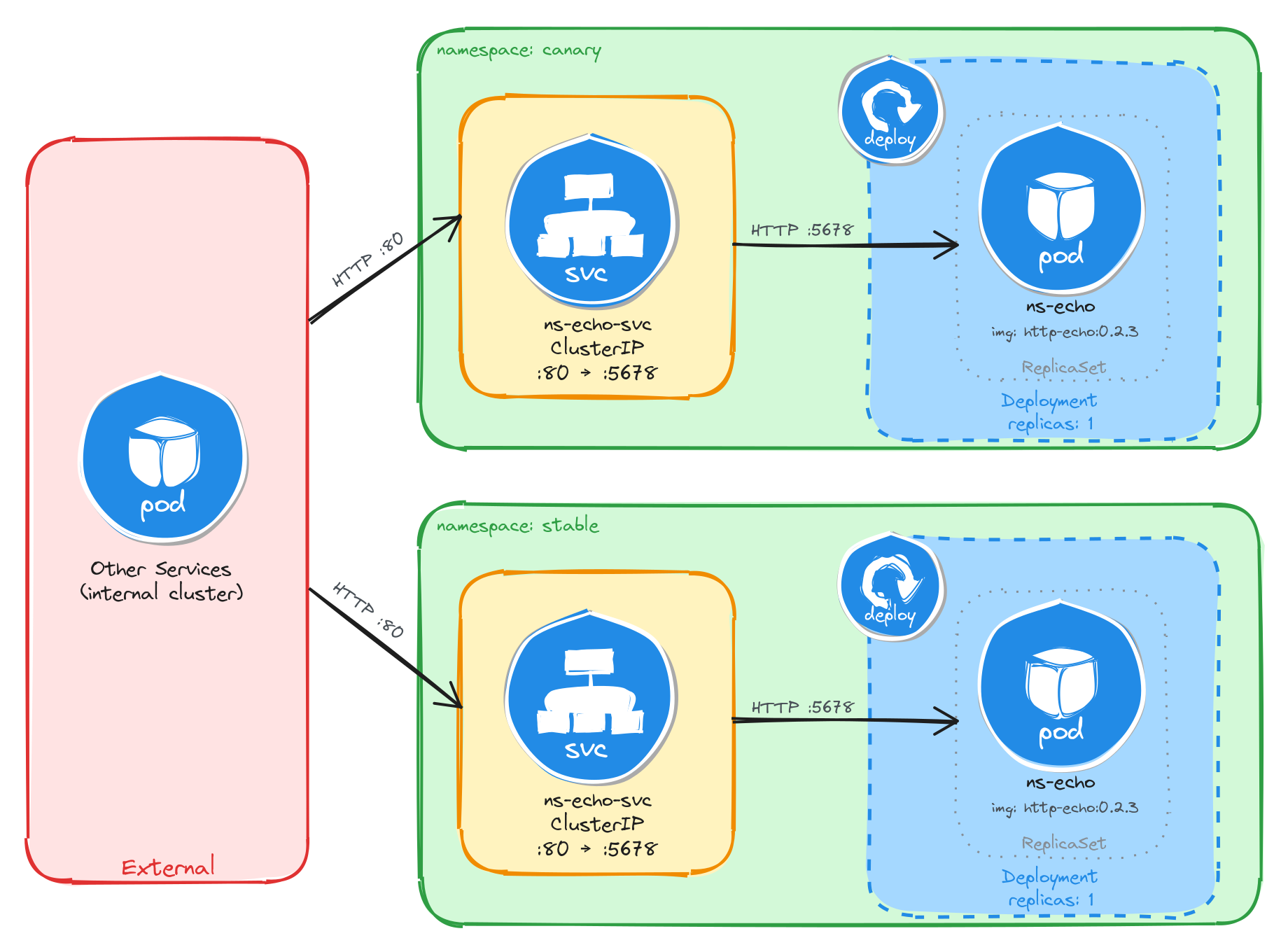

3.3.1 Architectural design

The task requires a single container image, brief downtime is acceptable, and the health endpoint must be reachable only from inside the cluster. These constraints drive three design decisions:

- Because the application is a single container, a Deployment with one replica is enough. The Deployment creates a ReplicaSet that manages the Pod. If the Pod crashes, the ReplicaSet recreates it automatically at the cost of a short period of unavailability, which the task explicitly allows.

- Other services need a stable address to reach the health endpoint. Pod IPs change every time a Pod is recreated, so we place a ClusterIP Service (podinfo-health-svc) in front of the Pod. The Service provides a fixed cluster-internal DNS name and load-balances traffic to the Pod. It accepts requests on port 9090 and forwards them to the container’s port 9898.

- The health endpoint must not be accessible from outside the cluster. A ClusterIP Service has no external port and no route from outside the cluster network, so it satisfies this requirement by design. No Gateway, Ingress, or NodePort is needed.

The diagram shows the resulting architecture: external clients have no path into the application, while internal services reach the health endpoint through the ClusterIP Service, which forwards traffic into the Pod managed by the Deployment.

3.3.2 Implementation

We start by creating a Deployment with a single replica (the default). The task allows short periods of unavailability, so one instance is enough. We use the stefanprodan/podinfo:6.4.0 image and declare that the container listens on port 9898. The kubectl create deployment command automatically adds the label app=podinfo-health to the Pods, which will be useful later when we create the Service.

kubectl create deployment podinfo-health \
  --image=stefanprodan/podinfo:6.4.0 \
  --port=9898

To inspect the YAML that would be applied without actually creating the resource, use the --dry-run=client -o yaml flags:

kubectl create deployment podinfo-health \
  --image=stefanprodan/podinfo:6.4.0 \
  --port=9898 \
  --dry-run=client -o yaml

The output should look similar to this:

apiVersion: apps/v1
kind: Deployment
metadata:
  creationTimestamp: null
  labels:
    app: podinfo-health
  name: podinfo-health
spec:
  replicas: 1
  selector:
    matchLabels:
      app: podinfo-health
  strategy: {}
  template:
    metadata:
      creationTimestamp: null
      labels:
        app: podinfo-health
    spec:
      containers:
      - image: stefanprodan/podinfo:6.4.0
        name: podinfo
        ports:
        - containerPort: 9898
        resources: {}
status: {}

Next, we expose the Deployment as a ClusterIP Service. ClusterIP is the right choice here because it gives other services inside the cluster a stable address for reaching the health endpoint while keeping it inaccessible from outside.

We use kubectl expose instead of creating the Service manually with kubectl create service clusterip because it automatically sets the selector to match the Deployment Pods, which is exactly the wiring we need. The Service listens on port 9090 and forwards traffic to the container port 9898.

kubectl expose deployment podinfo-health \
  --name=podinfo-health-svc \
  --type=ClusterIP \
  --port=9090 \
  --target-port=9898

3.3.2.1 Verify resource creation

To verify that the Pod is running, execute the following command, which filters Pods by the app=podinfo-health label automatically set by kubectl create deployment:

kubectl get pods -l app=podinfo-health

The output should look similar to this:

NAME                              READY   STATUS    RESTARTS   AGE
podinfo-health-7d6c8b4f59-r3n8x   1/1     Running   0          8m

To verify that the Service is configured correctly, run:

kubectl get svc podinfo-health-svc

The output should look similar to this:

NAME                 TYPE        CLUSTER-IP      EXTERNAL-IP   PORT(S)    AGE
podinfo-health-svc   ClusterIP   10.104.72.186   <none>        9090/TCP   6m

From this output, we can confirm that internal access to the health endpoint is available at http://podinfo-health-svc:9090 and that external access is not possible, since no external IP is assigned.

3.3.2.2 Test the health endpoint

To test the health endpoint, create a temporary Pod using busybox:

kubectl run -it --rm --restart=Never busybox --image=busybox sh

Inside the busybox Pod, use wget to reach the health endpoint through the Service, addressing it by its DNS name. The endpoint should respond with a JSON payload containing pod metadata.

wget -qO- http://podinfo-health-svc:9090

The response should look similar to the example below:

{
  "hostname": "podinfo-health-7d6c8b4f59-r3n8x",
  "version": "6.4.0",
  "revision": "",
  "color": "#34577c",
  "logo": "https://raw.githubusercontent.com/stefanprodan/podinfo/gh-pages/cuddle_clap.gif",
  "message": "greetings from podinfo v6.4.0",
  "goos": "linux",
  "goarch": "amd64",
  "runtime": "go1.21.0",
  "num_goroutine": "8",
  "num_cpu": "2"
}
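The same check can be run without an interactive shell by passing the wget command directly to the temporary Pod. The helper below is a sketch of that approach; the function name, the probe Pod name, and the 5-second wget timeout are assumptions made for illustration.

```shell
# Hypothetical helper: send one HTTP request from a temporary busybox Pod.
# $1 = URL to request from inside the cluster
probe_service() {
  url="$1"
  # --rm deletes the Pod once the command exits; -i attaches so the output is shown.
  kubectl run "probe-$$" --rm -i --restart=Never --image=busybox \
    -- wget -qO- -T 5 "$url"
}
```

`probe_service http://podinfo-health-svc:9090` should print the JSON payload, typically followed by a line from kubectl confirming that the probe Pod was deleted.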

3.4 Task 4: Design and deploy an internal welcome page

Your team needs an internal welcome page that runs inside the cluster and displays server information such as the server address, server name, and request URI. This helps developers quickly confirm that routing and DNS resolution are working correctly.

The welcome page must be packaged as a single container image (nginxdemos/hello). It does not need to be highly resilient, since brief periods of unavailability are acceptable.

However, other services inside the cluster need a stable address to reach it, so Pod IPs alone are not enough. Make sure the welcome page is strictly for internal use and not accessible from outside the cluster.

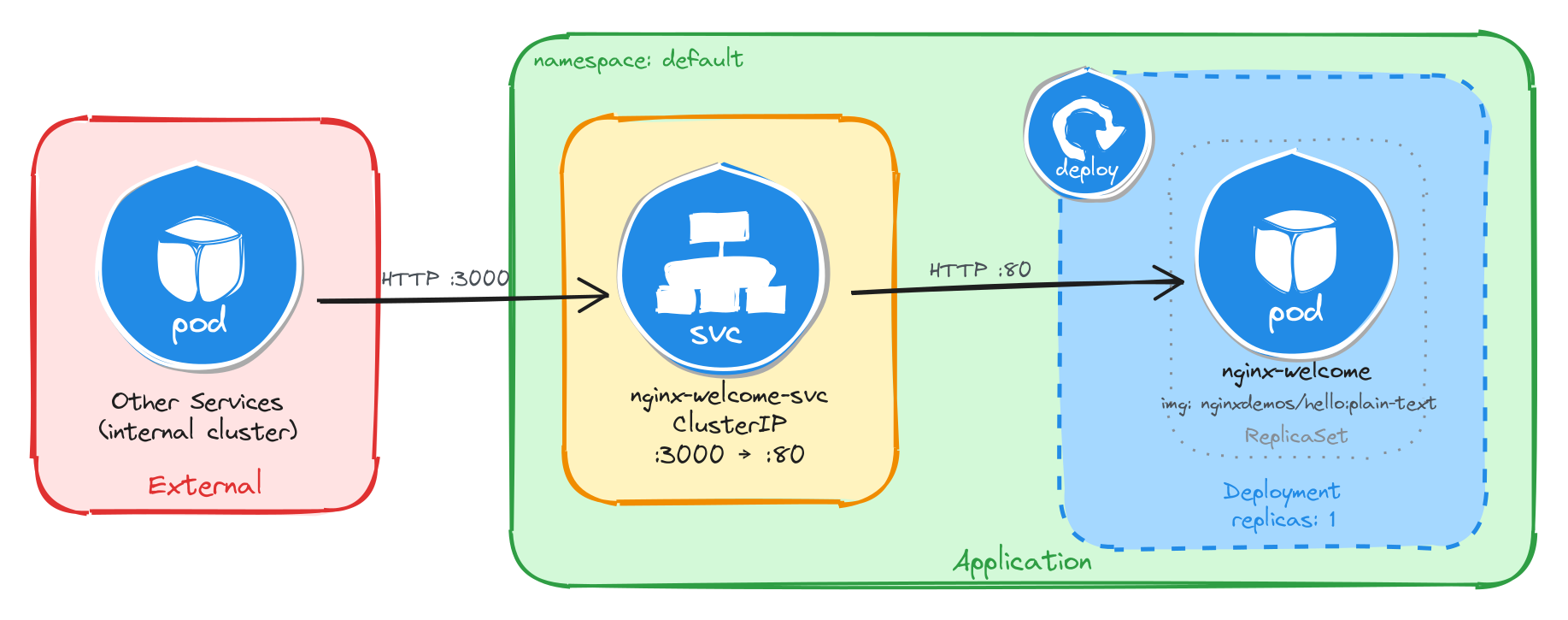

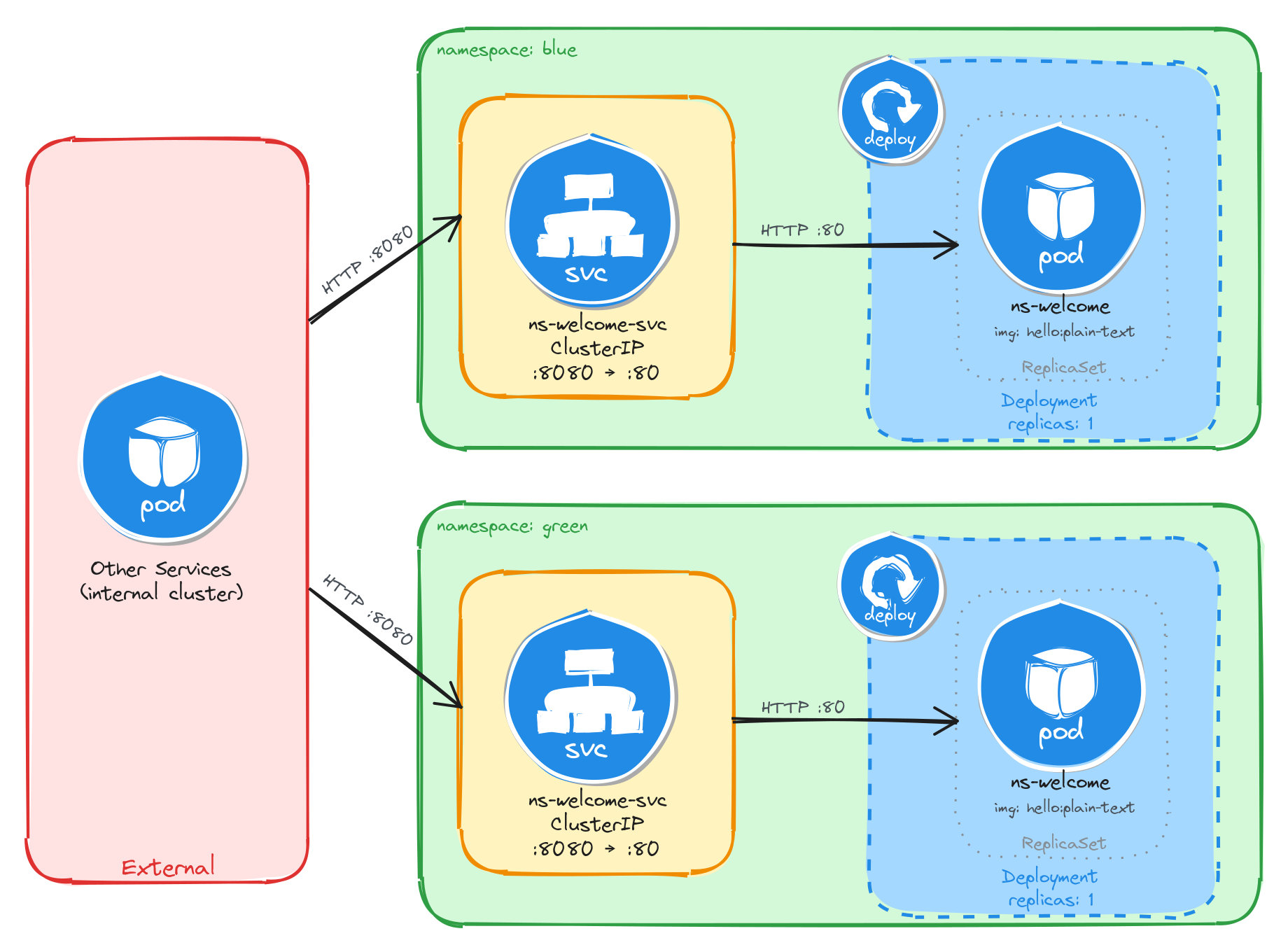

3.4.1 Architectural design

The task requires a single container image, brief downtime is acceptable, and the welcome page must be reachable only from inside the cluster. These constraints drive three design decisions:

- Because the application is a single container, a Deployment with one replica is enough. The Deployment creates a ReplicaSet that manages the Pod. If the Pod crashes, the ReplicaSet recreates it automatically at the cost of a short period of unavailability, which the task explicitly allows.

- Other services need a stable address to reach the welcome page. Pod IPs change every time a Pod is recreated, so we place a ClusterIP Service (nginx-welcome-svc) in front of the Pod. The Service provides a fixed cluster-internal DNS name and load-balances traffic to the Pod. It accepts requests on port 3000 and forwards them to the container’s port 80.

- The welcome page must not be accessible from outside the cluster. A ClusterIP Service has no external port and no route from outside the cluster network, so it satisfies this requirement by design. No Gateway, Ingress, or NodePort is needed.

The diagram shows the resulting architecture: external clients have no path into the application, while internal services reach the welcome page through the ClusterIP Service, which forwards traffic into the Pod managed by the Deployment.

3.4.2 Implementation

We start by creating a Deployment with a single replica (the default). The task allows short periods of unavailability, so one instance is enough. We use the nginxdemos/hello:plain-text image and declare that the container listens on port 80. The kubectl create deployment command automatically adds the label app=nginx-welcome to the Pods, which will be useful later when we create the Service.

kubectl create deployment nginx-welcome \
  --image=nginxdemos/hello:plain-text \
  --port=80

To inspect the YAML that would be applied without actually creating the resource, use the --dry-run=client -o yaml flags:

kubectl create deployment nginx-welcome \
  --image=nginxdemos/hello:plain-text \
  --port=80 \
  --dry-run=client -o yaml

The output should look similar to this:

apiVersion: apps/v1
kind: Deployment
metadata:
  creationTimestamp: null
  labels:
    app: nginx-welcome
  name: nginx-welcome
spec:
  replicas: 1
  selector:
    matchLabels:
      app: nginx-welcome
  strategy: {}
  template:
    metadata:
      creationTimestamp: null
      labels:
        app: nginx-welcome
    spec:
      containers:
      - image: nginxdemos/hello:plain-text
        name: hello
        ports:
        - containerPort: 80
        resources: {}
status: {}

Next, we expose the Deployment as a ClusterIP Service. ClusterIP is the right choice here because it gives other services inside the cluster a stable address for reaching the welcome page while keeping it inaccessible from outside.

We use kubectl expose instead of creating the Service manually with kubectl create service clusterip because it automatically sets the selector to match the Deployment Pods, which is exactly the wiring we need. The Service listens on port 3000 and forwards traffic to the container port 80.

kubectl expose deployment nginx-welcome \
  --name=nginx-welcome-svc \
  --type=ClusterIP \
  --port=3000 \
  --target-port=80

3.4.2.1 Verify resource creation

To verify that the Pod is running, execute the following command, which filters Pods by the app=nginx-welcome label automatically set by kubectl create deployment:

kubectl get pods -l app=nginx-welcome

The output should look similar to this:

NAME                             READY   STATUS    RESTARTS   AGE
nginx-welcome-6c9d4f8b5a-t4w2q   1/1     Running   0          10m

To verify that the Service is configured correctly, run:

kubectl get svc nginx-welcome-svc

The output should look similar to this:

NAME                TYPE        CLUSTER-IP      EXTERNAL-IP   PORT(S)    AGE
nginx-welcome-svc   ClusterIP   10.98.231.114   <none>        3000/TCP   8m

From this output, we can confirm that internal access to the welcome page is available at http://nginx-welcome-svc:3000 and that external access is not possible, since no external IP is assigned.
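A Service with the right type and port can still have no backends if its selector does not match any Pod. As an optional extra check, the sketch below lists the EndpointSlices that Kubernetes maintains for the Service next to the Pods carrying the app label, so the addresses can be compared. The function name is an assumption.

```shell
# Hypothetical helper: show which Pod addresses a Service currently routes to.
# $1 = Service name, $2 = value of the app label on the Pods
show_service_wiring() {
  svc="$1"; app="$2"
  # EndpointSlices are labelled with the name of the Service they belong to.
  kubectl get endpointslices -l "kubernetes.io/service-name=$svc"
  # List the Pods with their IPs for comparison.
  kubectl get pods -l "app=$app" -o wide
}
```

With `show_service_wiring nginx-welcome-svc nginx-welcome`, the endpoint address in the first listing should match the Pod IP in the second.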

3.4.2.2 Test the welcome page

To test the welcome page, create a temporary Pod using busybox:

kubectl run -it --rm --restart=Never busybox --image=busybox sh

Inside the busybox Pod, use wget to reach the welcome page through the Service, addressing it by its DNS name. The page should respond with plain text showing server information.

wget -qO- http://nginx-welcome-svc:3000

The response should look similar to the example below:

Server address: 10.244.0.15:80
Server name: nginx-welcome-6c9d4f8b5a-t4w2q
Date: 25/Mar/2026:10:32:18 +0000
URI: /
Request ID: a1b2c3d4e5f6a7b8c9d0e1f2a3b4c5d6

3.5 Task 5: Design and deploy an internal echo service

Your team needs an internal echo service that runs inside the cluster and mirrors back the body of any HTTP request it receives. This helps developers test and validate payloads sent by other microservices without needing an external tool.

The echo service must be packaged as a single container image (jmalloc/echo-server). It does not need to be highly resilient, since brief periods of unavailability are acceptable.

However, other services inside the cluster need a stable address to reach it, so Pod IPs alone are not enough. Make sure the echo service is strictly for internal use and not accessible from outside the cluster.

3.5.1 Architectural design

The task requires a single container image, brief downtime is acceptable, and the echo service must be reachable only from inside the cluster. These constraints drive three design decisions:

- Because the application is a single container, a Deployment with one replica is enough. The Deployment creates a ReplicaSet that manages the Pod. If the Pod crashes, the ReplicaSet recreates it automatically at the cost of a short period of unavailability, which the task explicitly allows.

- Other services need a stable address to reach the echo service. Pod IPs change every time a Pod is recreated, so we place a ClusterIP Service (echo-service-svc) in front of the Pod. The Service provides a fixed cluster-internal DNS name and load-balances traffic to the Pod. It accepts requests on port 8080 and forwards them to the container’s port 8080.

- The echo service must not be accessible from outside the cluster. A ClusterIP Service has no external port and no route from outside the cluster network, so it satisfies this requirement by design. No Gateway, Ingress, or NodePort is needed.

The diagram shows the resulting architecture: external clients have no path into the application, while internal services reach the echo service through the ClusterIP Service, which forwards traffic into the Pod managed by the Deployment.

3.5.2 Implementation

We start by creating a Deployment with a single replica (the default). The task allows short periods of unavailability, so one instance is enough. We use the jmalloc/echo-server:0.3.6 image and declare that the container listens on port 8080. The kubectl create deployment command automatically adds the label app=echo-service to the Pods, which will be useful later when we create the Service.

kubectl create deployment echo-service \
  --image=jmalloc/echo-server:0.3.6 \
  --port=8080

To inspect the YAML that would be applied without actually creating the resource, use the --dry-run=client -o yaml flags:

kubectl create deployment echo-service \
  --image=jmalloc/echo-server:0.3.6 \
  --port=8080 \
  --dry-run=client -o yaml

The output should look similar to this:

apiVersion: apps/v1
kind: Deployment
metadata:
  creationTimestamp: null
  labels:
    app: echo-service
  name: echo-service
spec:
  replicas: 1
  selector:
    matchLabels:
      app: echo-service
  strategy: {}
  template:
    metadata:
      creationTimestamp: null
      labels:
        app: echo-service
    spec:
      containers:
      - image: jmalloc/echo-server:0.3.6
        name: echo-server
        ports:
        - containerPort: 8080
        resources: {}
status: {}

Next, we expose the Deployment as a ClusterIP Service. ClusterIP is the right choice here because it gives other services inside the cluster a stable address for reaching the echo service while keeping it inaccessible from outside.

We use kubectl expose instead of creating the Service manually with kubectl create service clusterip because it automatically sets the selector to match the Deployment Pods, which is exactly the wiring we need. The Service listens on port 8080 and forwards traffic to the container port 8080.

kubectl expose deployment echo-service \
  --name=echo-service-svc \
  --type=ClusterIP \
  --port=8080 \
  --target-port=8080
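For readers who prefer to keep the Service in a file, the same wiring can also be written declaratively. The block below is a minimal sketch of what an equivalent manifest might look like, not the output of kubectl expose. The file name echo-service-svc.yaml is hypothetical, and the selector assumes the Pods carry the app=echo-service label described above.

```shell
# Write a Service manifest roughly equivalent to the kubectl expose command.
# The file name is illustrative; the selector assumes the Pods carry the
# app=echo-service label added by kubectl create deployment.
cat <<'EOF' > echo-service-svc.yaml
apiVersion: v1
kind: Service
metadata:
  name: echo-service-svc
spec:
  type: ClusterIP
  selector:
    app: echo-service
  ports:
    - protocol: TCP
      port: 8080        # port the Service listens on inside the cluster
      targetPort: 8080  # port the echo-server container listens on
EOF
```

Applying this file with kubectl apply -f echo-service-svc.yaml, instead of running kubectl expose, should yield a Service with the same type, selector, and ports.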

3.5.2.1 Verify resource creation

To verify that the Pod is running, execute the following command, which filters Pods by the app=echo-service label automatically set by kubectl create deployment:

kubectl get pods -l app=echo-service

The output should look similar to this:

NAME                            READY   STATUS    RESTARTS   AGE
echo-service-5b7d9f6c48-m6k3p   1/1     Running   0          7m

To verify that the Service is configured correctly, run:

kubectl get svc echo-service-svc

The output should look similar to this:

NAME               TYPE        CLUSTER-IP      EXTERNAL-IP   PORT(S)    AGE
echo-service-svc   ClusterIP   10.107.54.221   <none>        8080/TCP   5m

From this output, we can confirm that internal access to the echo service is available at http://echo-service-svc:8080 and that external access is not possible, since no external IP is assigned.
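The short name echo-service-svc resolves only for clients in the same namespace as the Service. Clients in other namespaces need the fully qualified name. The helper below is a small sketch of how that name is composed; service_fqdn is a hypothetical function, and it assumes the Service lives in the default namespace and that the cluster uses the default cluster.local domain.

```shell
# Build the fully qualified DNS name of a Service.
# Assumption: the cluster domain is "cluster.local" (the usual default);
# some clusters are configured with a different domain.
service_fqdn() {
  name="$1"
  namespace="${2:-default}"
  echo "${name}.${namespace}.svc.cluster.local"
}

# URL that a client in any namespace could use to reach the echo service,
# assuming the Service was created in the "default" namespace.
echo "http://$(service_fqdn echo-service-svc default):8080"
```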

3.5.2.2 Test the echo service

To test the echo service, create a temporary Pod using busybox:

kubectl run -it --rm --restart=Never busybox --image=busybox sh

Inside the busybox Pod, use wget to send a request to the echo service through the Service ClusterIP. The service should echo back the request details.

wget -qO- http://echo-service-svc:8080

The response should look similar to the example below:

Request served by echo-service-5b7d9f6c48-m6k3p

HTTP/1.1 GET /

Host: echo-service-svc:8080

User-Agent: Wget

Connection: close

4 Multi-container deployment

Design and deploy a Pod with sidecar containers and a service for internal access.

This category includes the following learning objectives:

- Understanding of Pods.

- Understanding of Deployments.

- Knowledge of multi-container pod patterns and container lifecycle.

- Understanding of shared volumes between containers.

4.1 Task 1: Design and deploy a web server with a logging sidecar

Your team needs an internal web server that serves a static page inside the cluster. The operations team also requires real-time visibility into the access logs of the web server without having to exec into the running container.

The web server must run as an nginx container. A second container running busybox must act as a logging sidecar that continuously reads the nginx access log and prints it to its own standard output.

The web server must be reachable from other services inside the cluster through a stable address, but it must not be accessible from outside the cluster.

4.1.1 Architectural design

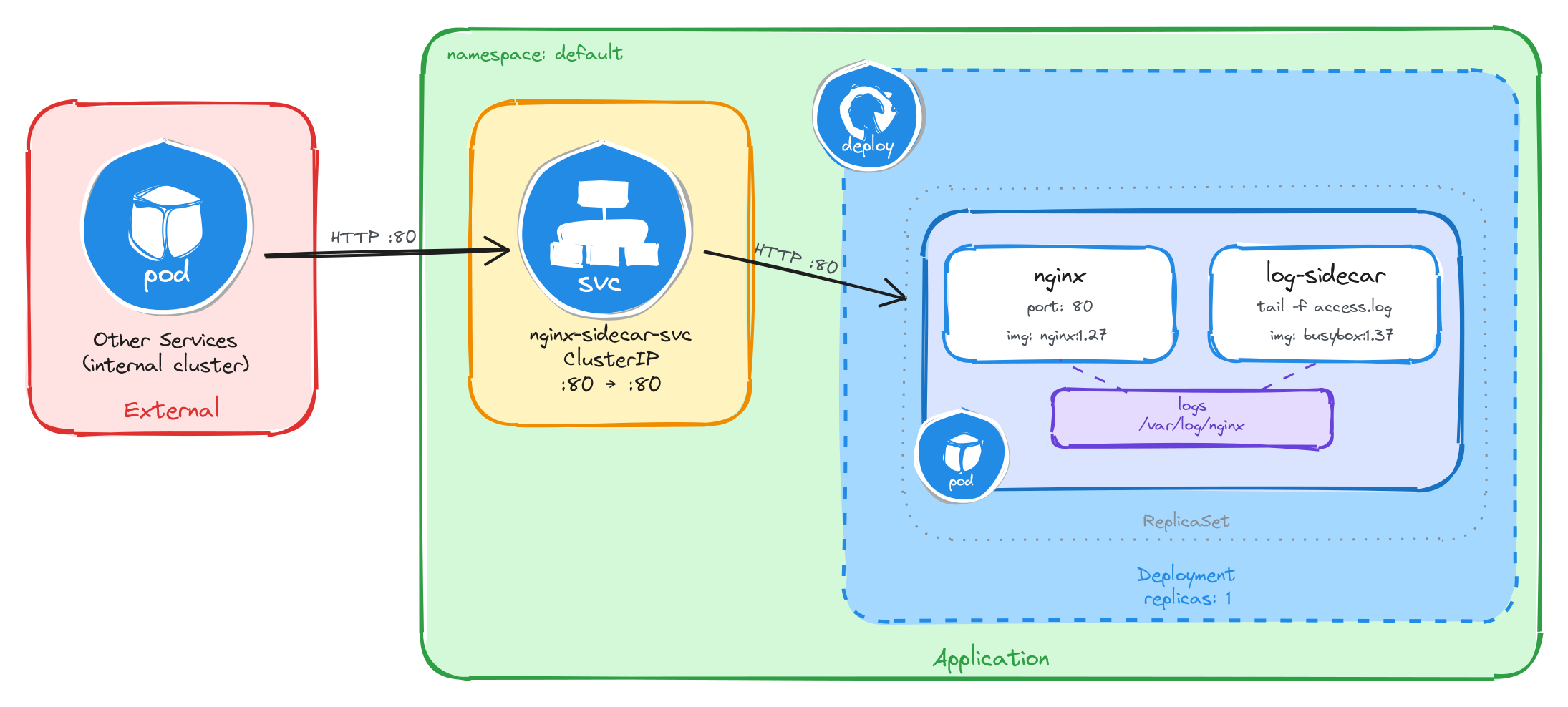

The task requires two containers that share log data, brief downtime is acceptable, and the web server must be reachable only from inside the cluster. These constraints drive four design decisions:

- A single Deployment with one replica is enough because the application needs two containers in the same Pod, the nginx web server and the busybox logging sidecar. The Deployment creates a ReplicaSet that manages the Pod. If the Pod crashes, the ReplicaSet recreates it automatically at the cost of a short period of unavailability, which the task explicitly allows.

- The sidecar needs access to nginx’s access logs without execing into the nginx container. A volume mounted at /var/log/nginx in both containers solves this: nginx writes its access log to the shared volume, and the sidecar continuously reads it with tail -f, streaming entries to its own standard output. This keeps the two containers decoupled: each has a single responsibility and the shared volume acts as the data bridge between them.

- Other services need a stable address to reach the web server. Pod IPs change every time a Pod is recreated, so we place a ClusterIP Service (nginx-sidecar-svc) in front of the Pod. The Service provides a fixed cluster-internal DNS name and forwards traffic to the nginx container on port 80.

- The web server must not be accessible from outside the cluster. A ClusterIP Service has no external port and no route from outside the cluster network, so it satisfies this requirement by design. No Gateway, Ingress, or NodePort is needed.

The diagram shows the resulting architecture: external clients have no path into the application, while internal services reach the web server through the ClusterIP Service, which forwards traffic into the Pod managed by the Deployment. Inside the Pod, the nginx container serves requests and writes access logs to a shared volume, which the logging sidecar reads and streams to standard output.

4.1.2 Implementation

This Pod needs two containers that share a volume, and the sidecar needs its own command. The flags of kubectl create deployment cannot express that, so we need a YAML manifest to define both containers and the shared volume within the same Pod.

We start by creating a file called nginx-with-sidecar.yaml with the following content:

cat <<'EOF' > nginx-with-sidecar.yaml
apiVersion: apps/v1
kind: Deployment
metadata:
  name: nginx-with-sidecar
  labels:
    app: nginx-with-sidecar
spec:
  replicas: 1
  selector:
    matchLabels:
      app: nginx-with-sidecar
  template:
    metadata:
      labels:
        app: nginx-with-sidecar
    spec:
      containers:
        - name: nginx
          image: nginx:1.27
          ports:
            - containerPort: 80
          volumeMounts:
            - name: logs
              mountPath: /var/log/nginx
        - name: log-sidecar
          image: busybox:1.37
          command:
            - sh
            - -c
            - tail -f /var/log/nginx/access.log
          volumeMounts:
            - name: logs
              mountPath: /var/log/nginx
      volumes:
        - name: logs
          emptyDir: {}
EOF

There are a few things to note in this manifest:

- Shared volume: An emptyDir volume called logs is mounted at /var/log/nginx in both containers. This is how the sidecar reads the log files written by nginx. An emptyDir volume is created when the Pod is assigned to a node and exists as long as the Pod is running on that node, making it ideal for sharing temporary data between containers in the same Pod.

- Sidecar container: The log-sidecar container runs tail -f on the nginx access log. This means it will continuously stream new log entries to its standard output, where they can be read with kubectl logs.

- Single replica: One replica is enough since brief unavailability is acceptable.

To verify the file was created correctly, run:

cat nginx-with-sidecar.yaml

Apply the manifest to create the Deployment:

kubectl apply -f nginx-with-sidecar.yaml

Next, we expose the Deployment as a ClusterIP Service. The Service listens on port 80 and forwards traffic to the nginx container port 80.

kubectl expose deployment nginx-with-sidecar \
  --name=nginx-sidecar-svc \
  --type=ClusterIP \
  --port=80 \
  --target-port=80

4.1.2.1 Verify resource creation

To verify that the Pod is running and that both containers are ready, execute the following command:

kubectl get pods -l app=nginx-with-sidecar --watch

The output should look similar to this. Notice that the READY column shows 2/2, confirming that both the nginx container and the log-sidecar container are running:

NAME                                  READY   STATUS    RESTARTS   AGE
nginx-with-sidecar-5d4f7b8c9a-k2m8n   2/2     Running   0          2m

To verify that the Service is configured correctly, run:

kubectl get svc nginx-sidecar-svc

The output should look similar to this:

NAME                TYPE        CLUSTER-IP      EXTERNAL-IP   PORT(S)   AGE
nginx-sidecar-svc   ClusterIP   10.96.145.203   <none>        80/TCP    1m

4.1.2.2 Test the web server

To test the web server, create a temporary Pod and send a request through the Service:

kubectl run -it --rm --restart=Never busybox --image=busybox sh

Inside the busybox Pod, use wget to access the web server through the Service ClusterIP:

wget -qO- http://nginx-sidecar-svc

The response should be the default nginx welcome page:

<!DOCTYPE html>
<html>
<head>
<title>Welcome to nginx!</title>
<!-- CSS styles omitted for brevity -->
</head>
<body>
<h1>Welcome to nginx!</h1>
<p>If you see this page, the nginx web server is successfully installed and
working. Further configuration is required.</p>
<!-- Content omitted for brevity -->
</body>
</html>

4.1.2.3 Verify the sidecar logs

After sending the request above, exit the busybox Pod and verify that the sidecar captured the access log entry. First, get the Pod name:

POD_NAME=$(kubectl get pods \
  -l app=nginx-with-sidecar \
  -o jsonpath='{.items[0].metadata.name}') \
  && echo $POD_NAME

Then, read the logs from the log-sidecar container using the -c flag to specify which container to read from:

kubectl logs $POD_NAME -c log-sidecar

The output should show the access log entry from the request we made through the busybox Pod:

10.244.0.12 - - [05/Mar/2026:10:30:00 +0000] "GET / HTTP/1.1" 200 615 "-" "Wget"

This confirms that the sidecar pattern is working correctly: nginx writes logs to the shared volume, and the sidecar reads and exposes them through its standard output.

4.2 Task 2: Design and deploy a web server with an error monitoring sidecar

Your team needs an internal documentation portal that serves static content inside the cluster. The security team requires continuous monitoring of all error events generated by the web server for audit compliance, without modifying the web server configuration or accessing its container directly.

The web server must run as an httpd (Apache) container. A second container running busybox must act as an error monitoring sidecar that continuously reads the httpd error log and prints it to its own standard output.

The web server must be reachable from other services inside the cluster through a stable address, but it must not be accessible from outside the cluster.

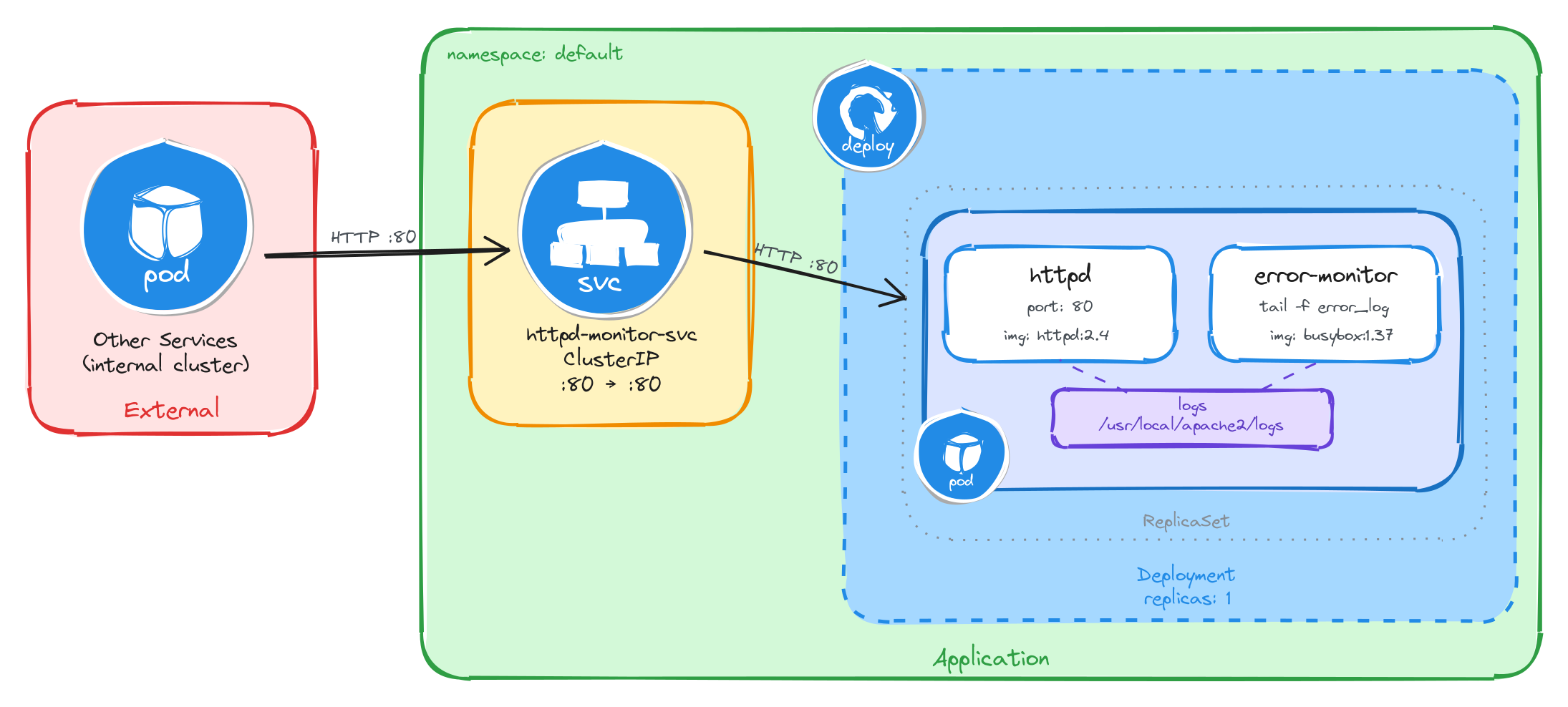

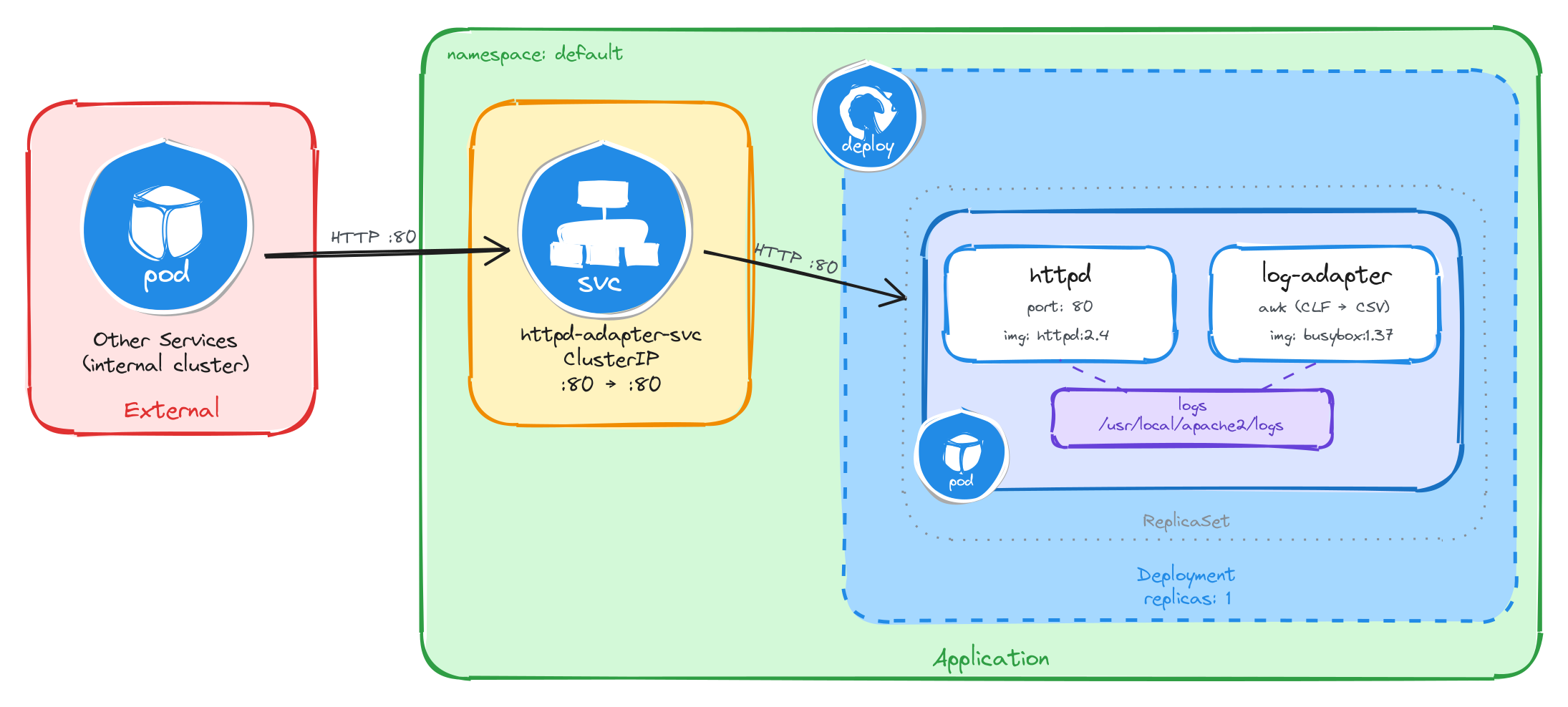

4.2.1 Architectural design

The task requires two containers that share error log data, brief downtime is acceptable, and the web server must be reachable only from inside the cluster. These constraints drive four design decisions:

- A single Deployment with one replica is enough because the application needs two containers in the same Pod, the httpd web server and the busybox error monitoring sidecar. The Deployment creates a ReplicaSet that manages the Pod. If the Pod crashes, the ReplicaSet recreates it automatically at the cost of a short period of unavailability, which the task explicitly allows.

- The sidecar needs access to httpd’s error logs without execing into the httpd container. A volume mounted at /usr/local/apache2/logs in both containers solves this: httpd writes its error log to the shared volume, and the sidecar continuously reads it with tail -f, streaming entries to its own standard output. This keeps the two containers decoupled: each has a single responsibility and the shared volume acts as the data bridge between them.

- Other services need a stable address to reach the web server. Pod IPs change every time a Pod is recreated, so we place a ClusterIP Service (httpd-monitor-svc) in front of the Pod. The Service provides a fixed cluster-internal DNS name and forwards traffic to the httpd container on port 80.

- The web server must not be accessible from outside the cluster. A ClusterIP Service has no external port and no route from outside the cluster network, so it satisfies this requirement by design. No Gateway, Ingress, or NodePort is needed.

The diagram shows the resulting architecture: external clients have no path into the application, while internal services reach the web server through the ClusterIP Service, which forwards traffic into the Pod managed by the Deployment. Inside the Pod, the httpd container serves requests and writes error logs to a shared volume, which the error monitoring sidecar reads and streams to standard output.

4.2.2 Implementation

This Pod needs two containers that share a volume, and each container needs its own command. The flags of kubectl create deployment cannot express that, so we need a YAML manifest to define both containers and the shared volume within the same Pod.

We start by creating a file called httpd-with-monitor.yaml with the following content:

cat <<'EOF' > httpd-with-monitor.yaml
apiVersion: apps/v1
kind: Deployment
metadata:
  name: httpd-with-monitor
  labels:
    app: httpd-with-monitor
spec:
  replicas: 1
  selector:
    matchLabels:
      app: httpd-with-monitor
  template:
    metadata:
      labels:
        app: httpd-with-monitor
    spec:
      containers:
        - name: httpd
          image: httpd:2.4
          command:
            - sh
            - -c
            - |
              sed -i 's|ErrorLog /proc/self/fd/2|ErrorLog logs/error_log|' \
                /usr/local/apache2/conf/httpd.conf
              httpd-foreground
          ports:
            - containerPort: 80
          volumeMounts:
            - name: logs
              mountPath: /usr/local/apache2/logs
        - name: error-monitor
          image: busybox:1.37
          command:
            - sh
            - -c
            - |
              until [ -f /usr/local/apache2/logs/error_log ]; do sleep 1; done
              tail -f /usr/local/apache2/logs/error_log
          volumeMounts:
            - name: logs
              mountPath: /usr/local/apache2/logs
      volumes:
        - name: logs
          emptyDir: {}
EOF

There are a few things to note in this manifest:

- Shared volume: An emptyDir volume called logs is mounted at /usr/local/apache2/logs in both containers. This is how the sidecar reads the log files written by httpd. An emptyDir volume is created when the Pod is assigned to a node and exists as long as the Pod is running on that node, making it ideal for sharing temporary data between containers in the same Pod.

- httpd command override: The official httpd:2.4 Docker image configures ErrorLog /proc/self/fd/2, which redirects error logs to stderr instead of writing them to a file. The sidecar reads from the shared volume, so it needs a file. The httpd container’s command uses sed to rewrite that directive to ErrorLog logs/error_log before starting httpd-foreground, making httpd write error logs to the shared volume where the sidecar can read them.

- Sidecar container: The error-monitor container first waits for error_log to exist. The emptyDir volume starts empty and httpd creates the file only when it starts, so tail -f would fail immediately without this guard. Once the file appears, the sidecar continuously streams new log entries to its standard output, where they can be read with kubectl logs.

- Single replica: One replica is enough since brief unavailability is acceptable.
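The wait-for-file guard can be tried outside the cluster. The sketch below is a local simulation under stated assumptions: a temporary directory stands in for the emptyDir volume, a background subshell stands in for httpd, and cat replaces tail -f so that the script terminates. The log message text is made up for illustration.

```shell
# Stand-in for the shared emptyDir volume (a temporary local directory).
log_dir="$(mktemp -d)"
log_file="$log_dir/error_log"

# Stand-in for httpd: the log file appears about a second after "startup".
# Writing to a temporary name and renaming makes the file appear complete.
(
  sleep 1
  echo "AH00489: resuming normal operations" > "$log_dir/.error_log.tmp"
  mv "$log_dir/.error_log.tmp" "$log_file"
) &

# Same guard as the sidecar: poll once per second until the file exists.
until [ -f "$log_file" ]; do sleep 1; done

# The real sidecar runs tail -f here; cat keeps this sketch finite.
first_entry="$(cat "$log_file")"
echo "$first_entry"

# Wait for the background writer and clean up.
wait
rm -rf "$log_dir"
```

Without the until loop, the read would run before the file exists and fail, which is the situation the guard in the sidecar avoids.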

To verify the file was created correctly, run:

cat httpd-with-monitor.yaml

Apply the manifest to create the Deployment:

kubectl apply -f httpd-with-monitor.yaml

Next, we expose the Deployment as a ClusterIP Service. The Service listens on port 80 and forwards traffic to the httpd container port 80.

kubectl expose deployment httpd-with-monitor \
  --name=httpd-monitor-svc \
  --type=ClusterIP \
  --port=80 \
  --target-port=80

4.2.2.1 Verify resource creation

To verify that the Pod is running and that both containers are ready, execute the following command:

kubectl get pods -l app=httpd-with-monitor --watch

The output should look similar to this. Notice that the READY column shows 2/2, confirming that both the httpd container and the error-monitor container are running:

NAME                                  READY   STATUS    RESTARTS   AGE
httpd-with-monitor-6b7f9c2d1e-x4p3q   2/2     Running   0          2m

To verify that the Service is configured correctly, run:

kubectl get svc httpd-monitor-svc

The output should look similar to this:

NAME                TYPE        CLUSTER-IP     EXTERNAL-IP   PORT(S)   AGE
httpd-monitor-svc   ClusterIP   10.96.178.42   <none>        80/TCP    1m

4.2.2.2 Test the web server

To test the web server, create a temporary Pod and send a request through the Service:

kubectl run -it --rm --restart=Never busybox --image=busybox sh

Inside the busybox Pod, use wget to access the web server through the Service ClusterIP:

wget -qO- http://httpd-monitor-svc

The response should be the default Apache welcome page:

<html>
<body>
<h1>It works!</h1>
</body>
</html>

4.2.2.3 Verify the sidecar logs

After sending the request above, exit the busybox Pod and verify that the sidecar captured the error log entries. First, get the Pod name:

POD_NAME=$(kubectl get pods \
  -l app=httpd-with-monitor \
  -o jsonpath='{.items[0].metadata.name}') \
  && echo $POD_NAME

Then, read the logs from the error-monitor container using the -c flag to specify which container to read from:

kubectl logs $POD_NAME -c error-monitor

The output should show error log entries from the httpd server, including startup messages and any request processing events:

[Thu Mar 05 10:30:00.000000 2026] [mpm_event:notice] [pid 1:tid 1] AH00489: Apache/2.4.62 (Unix) configured -- resuming normal operations
[Thu Mar 05 10:30:00.000000 2026] [core:notice] [pid 1:tid 1] AH00094: Command line: 'httpd -D FOREGROUND'

This confirms that the sidecar pattern is working correctly: httpd writes error logs to the shared volume, and the sidecar reads and exposes them through its standard output.

4.3 Task 3: Design and deploy a Java application server with an access logging sidecar

Your team needs an internal Java application server that hosts backend services inside the cluster. The platform team requires a dedicated stream of HTTP access logs from the application server for traffic analysis and capacity planning, without modifying the server configuration or accessing its container directly.

The application server must run as a tomcat container. A second container running busybox must act as an access logging sidecar that continuously reads the Tomcat access log and prints it to its own standard output.

The application server must be reachable from other services inside the cluster through a stable address, but it must not be accessible from outside the cluster.

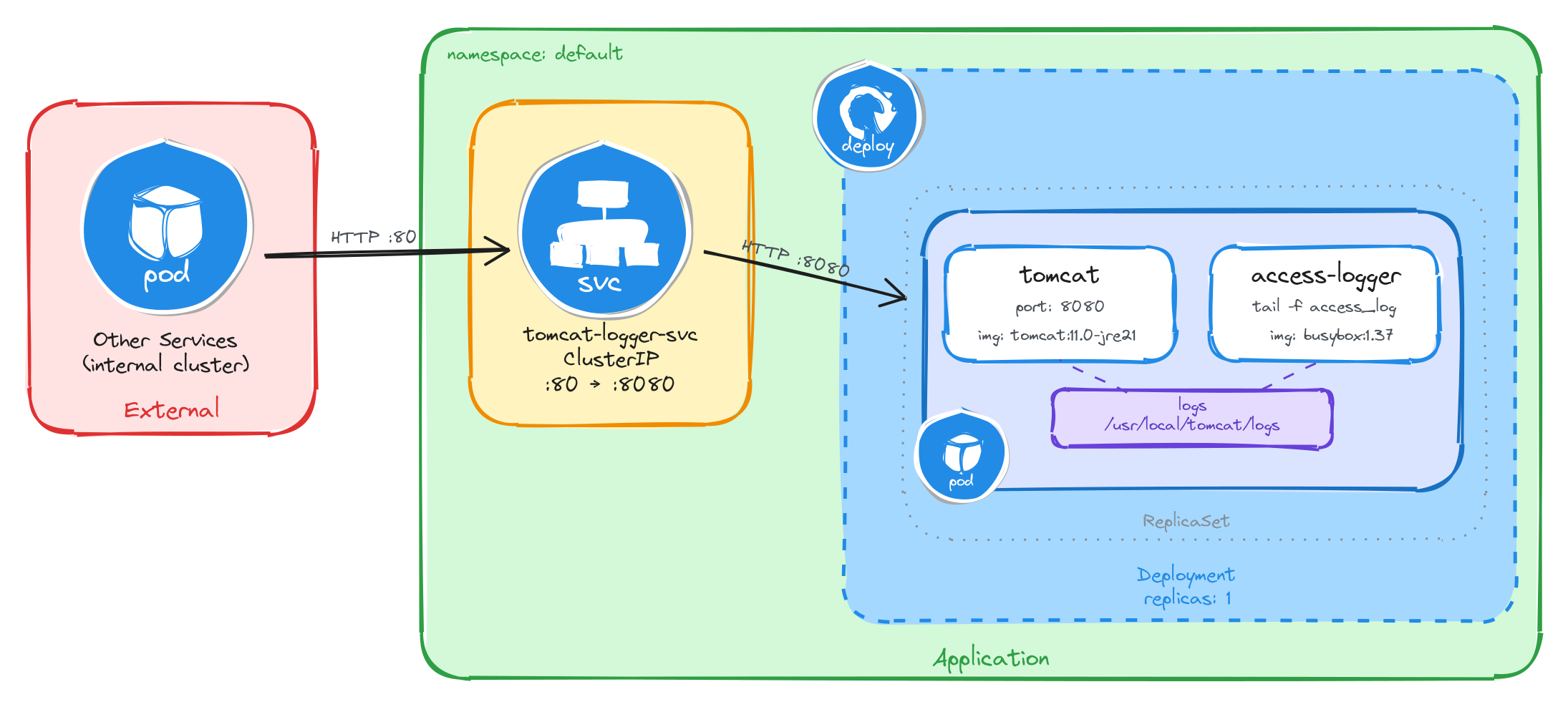

4.3.1 Architectural design

The task requires two containers that share access log data, brief downtime is acceptable, and the application server must be reachable only from inside the cluster. These constraints drive four design decisions:

- A single Deployment with one replica is enough because the application needs two containers in the same Pod, the Tomcat application server and the busybox access logging sidecar. The Deployment creates a ReplicaSet that manages the Pod. If the Pod crashes, the ReplicaSet recreates it automatically at the cost of a short period of unavailability, which the task explicitly allows.

- The sidecar needs access to Tomcat’s access logs without execing into the Tomcat container. A volume mounted at /usr/local/tomcat/logs in both containers solves this: Tomcat writes its access log to the shared volume, and the sidecar continuously reads it with tail -f, streaming entries to its own standard output. This keeps the two containers decoupled: each has a single responsibility and the shared volume acts as the data bridge between them.

- Other services need a stable address to reach the application server. Pod IPs change every time a Pod is recreated, so we place a ClusterIP Service (tomcat-logger-svc) in front of the Pod. The Service provides a fixed cluster-internal DNS name and forwards traffic on port 80 to the Tomcat container on port 8080.

- The application server must not be accessible from outside the cluster. A ClusterIP Service has no external port and no route from outside the cluster network, so it satisfies this requirement by design. No Gateway, Ingress, or NodePort is needed.

The diagram shows the resulting architecture: external clients have no path into the application, while internal services reach the application server through the ClusterIP Service, which forwards traffic into the Pod managed by the Deployment. Inside the Pod, the Tomcat container serves requests and writes access logs to a shared volume, which the access logging sidecar reads and streams to standard output.

4.3.2 Implementation

This Pod needs two containers that share a volume, a readiness probe, and a custom command for the sidecar. The flags of kubectl create deployment cannot express that, so we need a YAML manifest to define both containers and the shared volume within the same Pod.

We start by creating a file called tomcat-with-logger.yaml with the following content:

cat <<'EOF' > tomcat-with-logger.yaml
apiVersion: apps/v1
kind: Deployment
metadata:
  name: tomcat-with-logger
  labels:
    app: tomcat-with-logger
spec:
  replicas: 1
  selector:
    matchLabels:
      app: tomcat-with-logger
  template:
    metadata:
      labels:
        app: tomcat-with-logger
    spec:
      containers:
        - name: tomcat
          image: tomcat:11.0-jre21
          ports:
            - containerPort: 8080
          readinessProbe:
            tcpSocket:
              port: 8080
            initialDelaySeconds: 30
            periodSeconds: 5
          volumeMounts:
            - name: logs
              mountPath: /usr/local/tomcat/logs
        - name: access-logger
          image: busybox:1.37
          command:
            - sh
            - -c
            - |
              until ls /usr/local/tomcat/logs/localhost_access_log.*.txt 1>/dev/null 2>&1; do
                sleep 1
              done
              tail -f /usr/local/tomcat/logs/localhost_access_log.*.txt
          volumeMounts:
            - name: logs
              mountPath: /usr/local/tomcat/logs
      volumes:
        - name: logs
          emptyDir: {}
EOF

There are a few things to note in this manifest:

- Shared volume: An emptyDir volume called logs is mounted at /usr/local/tomcat/logs in both containers. This is how the sidecar reads the log files written by Tomcat. An emptyDir volume is created when the Pod is assigned to a node and exists as long as the Pod is running on that node, making it ideal for sharing temporary data between containers in the same Pod.

- Sidecar container: The access-logger container waits for the access log file to appear, then runs tail -f on it. Tomcat names its access log files with a date suffix (e.g., localhost_access_log.2026-03-26.txt), so the sidecar uses a wildcard pattern to match the current file. The shell expands the wildcard once, when tail starts, so after Tomcat rotates to the next day’s file the sidecar keeps following the old one until the container restarts. While the file is being followed, new log entries stream to the sidecar’s standard output, where they can be read with kubectl logs.

- Port mapping: Tomcat listens on port 8080 by default, unlike nginx or httpd, which listen on port 80. The Service will map its own port 80 to the container’s port 8080, so internal clients can reach it on the standard HTTP port.

- Readiness probe: Tomcat is a JVM-based server and takes longer to start than nginx or httpd. Without a readiness probe, the container is considered ready as soon as it starts, before Tomcat is actually accepting connections, so early requests through the Service would fail. The tcpSocket probe, with a 30-second initial delay, keeps the Service from routing traffic to the Pod until port 8080 accepts connections.

- Single replica: One replica is enough since brief unavailability is acceptable.

To verify the file was created correctly, run:

cat tomcat-with-logger.yaml

Apply the manifest to create the Deployment:

kubectl apply -f tomcat-with-logger.yaml

Next, we expose the Deployment as a ClusterIP Service. The Service listens on port 80 and forwards traffic to the Tomcat container port 8080.

kubectl expose deployment tomcat-with-logger \
  --name=tomcat-logger-svc \
  --type=ClusterIP \
  --port=80 \
  --target-port=8080
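The difference between the Service port and the container port is easier to see in a manifest. The block below is a sketch of a declarative equivalent, written under the assumption that the Pods carry the app=tomcat-with-logger label from the Deployment; the file name tomcat-logger-svc.yaml is hypothetical.

```shell
# Write a Service manifest that maps Service port 80 to container port 8080.
# The file name is illustrative; the selector assumes the Pod label set in
# the Deployment manifest (app=tomcat-with-logger).
cat <<'EOF' > tomcat-logger-svc.yaml
apiVersion: v1
kind: Service
metadata:
  name: tomcat-logger-svc
spec:
  type: ClusterIP
  selector:
    app: tomcat-with-logger
  ports:
    - protocol: TCP
      port: 80          # port that clients inside the cluster connect to
      targetPort: 8080  # port Tomcat listens on inside the container
EOF
```

With this mapping, clients use http://tomcat-logger-svc without a port suffix, while Tomcat keeps its default listener on 8080.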

4.3.2.1 Verify resource creation

To verify that the Pod is running and that both containers are ready, execute the following command:

kubectl get pods -l app=tomcat-with-logger --watch

The output should look similar to this. Notice that the READY column shows 2/2, confirming that both the Tomcat container and the access-logger container are running. Because Tomcat is a JVM-based server, it may take up to a minute before the Pod becomes fully ready. Wait until READY shows 2/2 before proceeding:

NAME                                  READY   STATUS    RESTARTS   AGE
tomcat-with-logger-4a9e1c7d3b-m6n2p   2/2     Running   0          2m

To verify that the Service is configured correctly, run:

kubectl get svc tomcat-logger-svc

The output should look similar to this:

NAME                TYPE        CLUSTER-IP     EXTERNAL-IP   PORT(S)   AGE
tomcat-logger-svc   ClusterIP   10.96.211.58   <none>        80/TCP    1m

4.3.2.2 Test the application server

To test the application server, create a temporary Pod and send a request through the Service:

kubectl run -it --rm --restart=Never busybox --image=busybox sh

Inside the busybox Pod, use wget to access the application server through the Service ClusterIP:

wget -qO- http://tomcat-logger-svc

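Because no web application is deployed, Tomcat answers with HTTP 404. BusyBox wget reports this as an error (a message similar to wget: server returned error: HTTP/1.1 404) instead of printing a page. That is the expected result here: it shows that the request reached Tomcat through the Service.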

4.3.2.3 Verify the sidecar logs

After sending the request above, exit the busybox Pod and verify that the sidecar captured the access log entry. First, get the Pod name:

POD_NAME=$(kubectl get pods \
  -l app=tomcat-with-logger \
  -o jsonpath='{.items[0].metadata.name}') \
  && echo $POD_NAME

Then, read the logs from the access-logger container using the -c flag to specify which container to read from:

kubectl logs $POD_NAME -c access-logger

The output should show the access log entry from the request we made through the busybox Pod:

10.244.0.15 - - [26/Mar/2026:10:30:00 +0000] "GET / HTTP/1.1" 404 762

This confirms that the sidecar pattern is working correctly: Tomcat writes access logs to the shared volume, and the sidecar reads and exposes them through its standard output.

4.4 Task 4: Design and deploy a web server with a log adapter sidecar

Your team needs an internal web server that serves static content inside the cluster. The analytics team needs the access logs delivered in CSV format so they can ingest them directly into their data pipeline, but the web server produces logs in Common Log Format (CLF). The log format must be converted without modifying the web server configuration or accessing its container directly.

The web server must run as an httpd (Apache) container. A second container running busybox must act as a log adapter sidecar that continuously reads the httpd access log in Common Log Format, transforms each entry into CSV (ip,timestamp,method,path,status), and prints the result to its own standard output.

The web server must be reachable from other services inside the cluster through a stable address, but it must not be accessible from outside the cluster.

4.4.1 Architectural design

The task requires two containers where the sidecar transforms log data from one format to another, brief downtime is acceptable, and the web server must be reachable only from inside the cluster. These constraints drive four design decisions:

- A single Deployment with one replica is enough because the application needs two containers in the same Pod, the httpd web server and the busybox log adapter sidecar. The Deployment creates a ReplicaSet that manages the Pod. If the Pod crashes, the ReplicaSet recreates it automatically at the cost of a short period of unavailability, which the task explicitly allows.

- The sidecar needs to read httpd’s access logs and transform them from Common Log Format to CSV without execing into the httpd container. A volume mounted at /usr/local/apache2/logs in both containers solves this: httpd writes its access log in Common Log Format to the shared volume, and the sidecar continuously reads it with tail -f, pipes each line through awk to extract the relevant fields, and outputs the result as CSV to its own standard output. This is the adapter pattern: the sidecar converts data from the format the main container produces into the format downstream consumers expect. The shared volume acts as the data bridge between them.

- Other services need a stable address to reach the web server. Pod IPs change every time a Pod is recreated, so we place a ClusterIP Service (httpd-adapter-svc) in front of the Pod. The Service provides a fixed cluster-internal DNS name and forwards traffic to the httpd container on port 80.

- The web server must not be accessible from outside the cluster. A ClusterIP Service has no external port and no route from outside the cluster network, so it satisfies this requirement by design. No Gateway, Ingress, or NodePort is needed.

The diagram shows the resulting architecture: external clients have no path into the application, while internal services reach the web server through the ClusterIP Service, which forwards traffic into the Pod managed by the Deployment. Inside the Pod, the httpd container serves requests and writes access logs in Common Log Format to a shared volume, which the log adapter sidecar reads, transforms to CSV, and streams to standard output.

4.4.2 Implementation

This Pod needs two containers that share a volume, and each container needs its own command. The flags of kubectl create deployment cannot express that, so we need a YAML manifest to define both containers and the shared volume within the same Pod.

We start by creating a file called httpd-with-adapter.yaml with the following content. The heredoc delimiter is quoted ('EOF') so that the local shell does not expand the awk field references ($1, $4, and so on) while writing the file:

cat <<'EOF' > httpd-with-adapter.yaml
apiVersion: apps/v1
kind: Deployment
metadata:
  name: httpd-with-adapter
  labels:
    app: httpd-with-adapter
spec:
  replicas: 1
  selector:
    matchLabels:
      app: httpd-with-adapter
  template:
    metadata:
      labels:
        app: httpd-with-adapter
    spec:
      containers:
        - name: httpd
          image: httpd:2.4
          command:
            - sh
            - -c
            - |
              sed -i 's|CustomLog /proc/self/fd/1 common|CustomLog logs/access_log common|' \
                /usr/local/apache2/conf/httpd.conf
              httpd-foreground
          ports:
            - containerPort: 80
          volumeMounts:
            - name: logs
              mountPath: /usr/local/apache2/logs
        - name: log-adapter
          image: busybox:1.37
          command:
            - sh
            - -c
            - |
              until [ -f /usr/local/apache2/logs/access_log ]; do sleep 1; done
              tail -f /usr/local/apache2/logs/access_log | \
                awk 'BEGIN{OFS=","} {print $1,substr($4,2),substr($6,2),$7,$9; fflush()}'
          volumeMounts:
            - name: logs
              mountPath: /usr/local/apache2/logs
      volumes:
        - name: logs
          emptyDir: {}
EOF
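One detail is worth checking when a manifest like this is written through a heredoc: the awk program contains $1, $4, and similar field references. With an unquoted delimiter (<<EOF) the local shell expands them before the file is written, while a quoted delimiter (<<'EOF') keeps them literal. The sketch below illustrates the difference on a one-line stand-in for the awk command; the variable names are made up for this example.

```shell
# Make $1 an empty string, which is how an unset $1 expands in a typical
# interactive shell.
set -- ""

# Unquoted delimiter: the shell substitutes $1 while reading the heredoc.
unquoted="$(cat <<EOF
awk '{print $1}'
EOF
)"

# Quoted delimiter: the heredoc body is taken literally.
quoted="$(cat <<'EOF'
awk '{print $1}'
EOF
)"

echo "unquoted: $unquoted"
echo "quoted:   $quoted"
```

If cat httpd-with-adapter.yaml shows an awk line with the field references missing, the file was written with an unquoted delimiter and should be recreated with a quoted one.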

There are a few things to note in this manifest:

- Shared volume: An emptyDir volume called logs is mounted at /usr/local/apache2/logs in both containers. This is how the sidecar reads the log files written by httpd. An emptyDir volume is created when the Pod is assigned to a node and exists as long as the Pod is running on that node, making it ideal for sharing temporary data between containers in the same Pod.

- httpd command override: The official httpd:2.4 Docker image configures CustomLog /proc/self/fd/1 common, which redirects access logs to stdout instead of writing them to a file. The sidecar reads from the shared volume, so it needs a file. The httpd container’s command uses sed to rewrite that directive to CustomLog logs/access_log common before starting httpd-foreground, making httpd write access logs to the shared volume where the sidecar can read them.

- Adapter sidecar: The log-adapter container first waits for access_log to exist. The emptyDir volume starts empty and httpd creates the file only when it starts, so tail -f would fail immediately without this guard. Once the file appears, the sidecar runs tail -f piped into a single-line awk command. BEGIN{OFS=","} sets the output field separator to a comma, so the print statement separates each field with a comma automatically. substr($4,2) strips the leading [ from the timestamp field, and substr($6,2) strips the leading " from the HTTP method. The fflush() call forces awk to flush its output buffer on every line; without it, kubectl logs would show nothing until the buffer fills up. This is the adapter pattern: the sidecar transforms data from the format the main container produces (CLF) into the format downstream consumers need (CSV).

- Single replica: One replica is enough since brief unavailability is acceptable.
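The awk program can be checked locally before deploying. The sketch below wraps the same program in a hypothetical helper function, clf_to_csv, and feeds it a made-up CLF entry; neither the function name nor the sample line comes from the manifest.

```shell
# Same awk program as the log-adapter sidecar, wrapped in a function so it
# can be tried outside the cluster.
clf_to_csv() {
  awk 'BEGIN{OFS=","} {print $1,substr($4,2),substr($6,2),$7,$9; fflush()}'
}

# Hypothetical CLF entry, made up for this sketch.
sample='10.244.0.12 - - [05/Mar/2026:10:30:00 +0000] "GET /index.html HTTP/1.1" 200 45'

# Fields after whitespace splitting:
#   $1 = 10.244.0.12            client IP
#   $4 = [05/Mar/2026:10:30:00  timestamp with a leading bracket
#   $6 = "GET                   method with a leading quote
#   $7 = /index.html            request path
#   $9 = 200                    status code
printf '%s\n' "$sample" | clf_to_csv
```

For this sample the function prints 10.244.0.12,05/Mar/2026:10:30:00,GET,/index.html,200. The time zone offset ($5) is dropped, and a request path that contains a comma would produce an extra CSV column, so a production adapter would likely need quoting rules that this sketch does not attempt.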

To verify the file was created correctly, run:

cat httpd-with-adapter.yaml

Apply the manifest to create the Deployment:

kubectl apply -f httpd-with-adapter.yaml

Next, we expose the Deployment as a ClusterIP Service. The Service listens on port 80 and forwards traffic to the httpd container port 80.

    kubectl expose deployment httpd-with-adapter \
      --name=httpd-adapter-svc \
      --type=ClusterIP \
      --port=80 \
      --target-port=80

4.4.2.1 Verify resource creation

To verify that the Pod is running and that both containers are ready, execute the following command:

kubectl get pods -l app=httpd-with-adapter --watch

The output should look similar to this. Notice that the READY column shows 2/2, confirming that both the httpd container and the log-adapter container are running:

    NAME                                  READY   STATUS    RESTARTS   AGE
    httpd-with-adapter-7c8d3e5f2a-r9k1w   2/2     Running   0          2m

To verify that the Service is configured correctly, run:

kubectl get svc httpd-adapter-svc

The output should look similar to this:

    NAME                TYPE        CLUSTER-IP     EXTERNAL-IP   PORT(S)   AGE
    httpd-adapter-svc   ClusterIP   10.96.192.71   <none>        80/TCP    1m

4.4.2.2 Test the web server

To test the web server, create a temporary Pod and send a request through the Service:

kubectl run -it --rm --restart=Never busybox --image=busybox sh

Inside the busybox Pod, use wget to access the web server through the DNS name of the Service:

wget -qO- http://httpd-adapter-svc

The response should be the default Apache welcome page (shown here with line breaks added for readability):

    <html>
    <body>
    <h1>It works!</h1>
    </body>
    </html>

4.4.2.3 Verify the adapter output

After sending the request above, exit the busybox Pod and verify that the adapter sidecar transformed the log entry. First, get the Pod name:

    POD_NAME=$(kubectl get pods \
      -l app=httpd-with-adapter \
      -o jsonpath='{.items[0].metadata.name}') \
      && echo $POD_NAME

Then, read the logs from the log-adapter container using the -c flag to specify which container to read from:

kubectl logs $POD_NAME -c log-adapter

The output should show the access log entry transformed from httpd’s native Common Log Format into CSV. Instead of the raw CLF:

10.244.0.12 - - [26/Mar/2026:10:30:00 +0000] "GET / HTTP/1.1" 200 45

The adapter sidecar outputs:

10.244.0.12,26/Mar/2026:10:30:00,GET,/,200

This confirms that the adapter pattern is working correctly: httpd writes access logs in Common Log Format to the shared volume, and the adapter sidecar reads them, extracts the relevant fields, and outputs them as CSV to its standard output for downstream consumers.
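A downstream consumer would typically want to confirm that every line really has the expected shape. The following is a minimal sketch of such a check, assuming that request paths contain no commas; the helper name check_adapter_csv is hypothetical and not part of the task.

```shell
# Hypothetical helper: succeeds only if every line on stdin has exactly
# five comma-separated fields (IP, timestamp, method, path, status).
check_adapter_csv() {
  awk -F',' 'NF != 5 { bad = 1 } END { exit bad + 0 }'
}

# Local check with the sample line from this section.
if printf '%s\n' '10.244.0.12,26/Mar/2026:10:30:00,GET,/,200' | check_adapter_csv; then
  echo "CSV format OK"
else
  echo "unexpected CSV format"
fi
```

Against the cluster, the same helper could be fed from the sidecar directly, for example with kubectl logs "$POD_NAME" -c log-adapter | check_adapter_csv.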

4.5 Task 5: Design and deploy a web server with a content sync sidecar

Your team needs an internal status page that displays up-to-date system information inside the cluster. The content must refresh automatically every 30 seconds without restarting the web server. The operations team wants the page to show the current timestamp and hostname so they can verify the content is being updated.

The web server must run as an nginx container that serves whatever HTML files are present in its document root. A second container running busybox must act as a content sync sidecar that regenerates an HTML status page every 30 seconds and writes it to a shared volume where nginx can serve it.

The web server must be reachable from other services inside the cluster through a stable address, but it must not be accessible from outside the cluster.

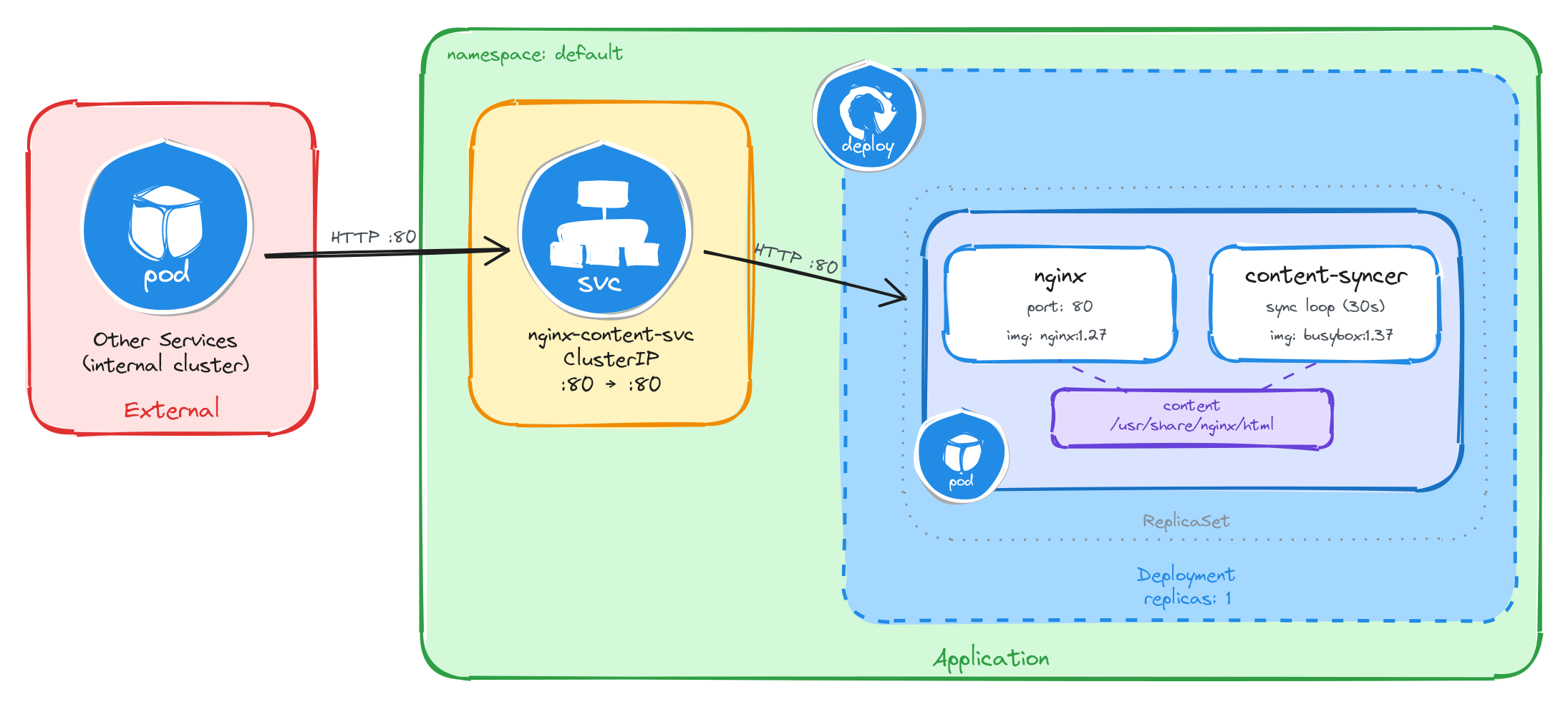

4.5.1 Architectural design

The task requires two containers that share content, it tolerates brief downtime, and the web server must be reachable only from inside the cluster. These constraints drive four design decisions:

- A single Deployment with one replica is enough. The application needs two containers in the same Pod, the nginx web server and the busybox content sync sidecar, and the Pod template of the Deployment defines both. The Deployment creates a ReplicaSet that manages the Pod. If the Pod crashes, the ReplicaSet recreates it automatically at the cost of a short period of unavailability, which is acceptable for this task.

- The sidecar needs to provide fresh content to nginx without modifying the nginx container or its configuration. A volume mounted at /usr/share/nginx/html in both containers solves this: the sidecar writes an index.html file to the shared volume every 30 seconds, and nginx serves it to incoming requests. This reverses the typical sidecar data flow: instead of the sidecar reading from the main container, the sidecar writes content that the main container serves. The shared volume acts as the data bridge between them.

- Other services need a stable address to reach the web server. Pod IPs change every time a Pod is recreated, so we place a ClusterIP Service (nginx-content-svc) in front of the Pod. The Service provides a fixed cluster-internal DNS name and forwards traffic to the nginx container on port 80.

- The web server must not be accessible from outside the cluster. A ClusterIP Service has no external port and no route from outside the cluster network, so it satisfies this requirement by design. No Gateway, Ingress, or NodePort is needed.

The diagram shows the resulting architecture: external clients have no path into the application, while internal services reach the web server through the ClusterIP Service, which forwards traffic into the Pod managed by the Deployment. Inside the Pod, the content sync sidecar regenerates the HTML status page every 30 seconds and writes it to a shared volume, which nginx reads and serves to clients.
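To make the "fixed cluster-internal DNS name" concrete, the sketch below builds the fully qualified name that cluster DNS would normally resolve for the Service. It assumes the Service lives in the default Namespace and that the cluster uses the default cluster.local domain; the helper name service_fqdn is hypothetical.

```shell
# Hypothetical helper: build the fully qualified DNS name of a Service.
# Assumes the default cluster domain "cluster.local"; a cluster can be
# configured with a different one.
service_fqdn() {
  service_name=$1
  namespace=${2:-default}
  echo "${service_name}.${namespace}.svc.cluster.local"
}

service_fqdn nginx-content-svc default
# prints: nginx-content-svc.default.svc.cluster.local
```

Pods in the same Namespace can usually use the short name (nginx-content-svc) on its own, which is what the test commands in this task rely on.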

4.5.2 Implementation

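Unlike the single-container Pods, this Pod cannot be fully described with kubectl create deployment alone: the command can set container images, but it cannot define a shared volume or a separate command for each container. We need a YAML manifest to define both containers and the shared volume within the same Pod.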

We start by creating a file called nginx-with-syncer.yaml:

cat <<'EOF' > nginx-with-syncer.yaml

With the following content:

    apiVersion: apps/v1
    kind: Deployment
    metadata:
      name: nginx-with-syncer
      labels:
        app: nginx-with-syncer
    spec:
      replicas: 1
      selector:
        matchLabels:
          app: nginx-with-syncer
      template:
        metadata:
          labels:
            app: nginx-with-syncer
        spec:
          containers:
            - name: nginx
              image: nginx:1.27
              ports:
                - containerPort: 80
              volumeMounts:
                - name: content
                  mountPath: /usr/share/nginx/html
            - name: content-syncer
              image: busybox:1.37
              command:
                - sh
                - -c
                - |
                  while true; do
                    echo "<html><head><title>Status Page</title></head><body><h1>System Status</h1><p>Hostname: $(hostname)</p><p>Last updated: $(date -u)</p></body></html>" > /usr/share/nginx/html/index.html
                    sleep 30
                  done
              volumeMounts:
                - name: content
                  mountPath: /usr/share/nginx/html
          volumes:
            - name: content
              emptyDir: {}
    EOF

There are a few things to note in this manifest:

- Shared volume: An emptyDir volume called content is mounted at /usr/share/nginx/html in both containers. This is how nginx serves the files written by the sidecar. An emptyDir volume is created when the Pod is assigned to a node and exists as long as the Pod is running on that node, making it ideal for sharing temporary data between containers in the same Pod.

- Reversed data flow: Unlike the previous tasks where the sidecar reads data produced by the main container, here the sidecar writes content that the main container serves. This demonstrates that the sidecar pattern is flexible: the shared volume can carry data in either direction.

- Sidecar container: The content-syncer container runs an infinite loop that regenerates index.html every 30 seconds using echo with $(hostname) and $(date -u) command substitutions. The shell evaluates these at runtime, producing the Pod’s actual hostname and the current UTC timestamp. This means every request to nginx will return a page that was updated at most 30 seconds ago.

- Single replica: One replica is enough since brief unavailability is acceptable.
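The body of the sidecar loop can be exercised locally, without a cluster. The sketch below runs one iteration against a temporary directory that stands in for the shared volume. It also shows one possible refinement that the manifest does not use: writing to a temporary file and renaming it, so that a reader never sees a half-written page. The names content_dir and render_status_page are hypothetical.

```shell
# Stand-in for the shared volume; in the Pod this is /usr/share/nginx/html.
content_dir=$(mktemp -d)

# Hypothetical helper: write the status page to a temporary file first,
# then rename it into place (a variation on the loop body in the manifest).
render_status_page() {
  target_dir=$1
  echo "<html><head><title>Status Page</title></head><body><h1>System Status</h1><p>Hostname: $(hostname)</p><p>Last updated: $(date -u)</p></body></html>" > "$target_dir/index.html.tmp"
  mv "$target_dir/index.html.tmp" "$target_dir/index.html"
}

# One iteration of the loop; the sidecar repeats this every 30 seconds.
render_status_page "$content_dir"
cat "$content_dir/index.html"
```

For a page this small the plain echo redirect in the manifest is a reasonable choice; the rename step matters more when the generated file is large or slow to produce.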

To verify the file was created correctly, run:

cat nginx-with-syncer.yaml

Apply the manifest to create the Deployment:

kubectl apply -f nginx-with-syncer.yaml

Next, we expose the Deployment as a ClusterIP Service. The Service listens on port 80 and forwards traffic to the nginx container port 80.

    kubectl expose deployment nginx-with-syncer \
      --name=nginx-content-svc \
      --type=ClusterIP \
      --port=80 \
      --target-port=80
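For readers who prefer a declarative workflow, the Service can also be written as a manifest. The following is a sketch of a roughly equivalent definition, to be used instead of the kubectl expose command rather than in addition to it. The file name nginx-content-svc.yaml is illustrative, and the selector assumes the app: nginx-with-syncer label from the Deployment manifest.

```shell
# Write a Service manifest that selects the Pods of the Deployment by label
# and forwards port 80 of the Service to port 80 of the container.
cat <<'EOF' > nginx-content-svc.yaml
apiVersion: v1
kind: Service
metadata:
  name: nginx-content-svc
spec:
  type: ClusterIP
  selector:
    app: nginx-with-syncer
  ports:
    - port: 80
      targetPort: 80
EOF

# Review the file before applying it.
cat nginx-content-svc.yaml
```

The manifest would then be applied with kubectl apply -f nginx-content-svc.yaml, in the same way as the Deployment.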

4.5.2.1 Verify resource creation

To verify that the Pod is running and that both containers are ready, execute the following command:

kubectl get pods -l app=nginx-with-syncer --watch

The output should look similar to this. Notice that the READY column shows 2/2, confirming that both the nginx container and the content-syncer container are running:

    NAME                                 READY   STATUS    RESTARTS   AGE
    nginx-with-syncer-3f8a2b6d4c-j7w5t   2/2     Running   0          2m
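The READY check can also be scripted instead of read by eye. The sketch below parses lines in the shape printed by kubectl get pods --no-headers and succeeds only when every listed Pod reports all of its containers ready; the helper name all_containers_ready is hypothetical.

```shell
# Hypothetical helper: reads Pod lines on stdin (NAME READY STATUS ...) and
# fails if any Pod has fewer ready containers than total containers,
# or if no Pod is listed at all.
all_containers_ready() {
  awk '{ split($2, ready, "/"); if (ready[1] != ready[2]) not_ready = 1 }
       END { exit ((not_ready || NR == 0) ? 1 : 0) }'
}

# Local check with the sample line from the output above.
if echo 'nginx-with-syncer-3f8a2b6d4c-j7w5t 2/2 Running 0 2m' | all_containers_ready; then
  echo "all containers ready"
else
  echo "some containers are not ready"
fi
```

Against the cluster, the input would come from kubectl get pods -l app=nginx-with-syncer --no-headers | all_containers_ready.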

To verify that the Service is configured correctly, run:

kubectl get svc nginx-content-svc

The output should look similar to this:

    NAME                TYPE        CLUSTER-IP     EXTERNAL-IP   PORT(S)   AGE
    nginx-content-svc   ClusterIP   10.96.156.33   <none>        80/TCP    1m

4.5.2.2 Test the web server

To test the web server, create a temporary Pod and send a request through the Service:

kubectl run -it --rm --restart=Never busybox --image=busybox sh

Inside the busybox Pod, use wget to access the web server through the DNS name of the Service:

wget -qO- http://nginx-content-svc

The response should be the dynamically generated status page. The sidecar writes it as a single line; it is shown here with line breaks added for readability:

    <html>
    <head><title>Status Page</title></head>
    <body>
    <h1>System Status</h1>
    <p>Hostname: nginx-with-syncer-3f8a2b6d4c-j7w5t</p>
    <p>Last updated: Thu Mar 26 10:30:00 UTC 2026</p>
    </body>
    </html>

4.5.2.3 Verify the content refreshes

To confirm that the sidecar is regenerating the page, wait at least 30 seconds and send a second request from inside the busybox Pod:

sleep 35 && wget -qO- http://nginx-content-svc

The Last updated timestamp should differ from the one returned by the first request.

This confirms that the sidecar pattern is working correctly: the content-syncer writes fresh HTML to the shared volume every 30 seconds, and nginx serves it to clients.
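Comparing the two responses can be automated as well. The following is a minimal sketch that extracts the Last updated value from two page snapshots and compares them; the helper name last_updated and the two sample pages are illustrative.

```shell
# Hypothetical helper: print the "Last updated" value found in the page on stdin.
last_updated() {
  sed -n 's/.*<p>Last updated: \([^<]*\)<\/p>.*/\1/p'
}

# Two sample responses taken 30 seconds apart (illustrative values).
first='<html><body><h1>System Status</h1><p>Hostname: demo</p><p>Last updated: Thu Mar 26 10:30:00 UTC 2026</p></body></html>'
second='<html><body><h1>System Status</h1><p>Hostname: demo</p><p>Last updated: Thu Mar 26 10:30:30 UTC 2026</p></body></html>'

first_ts=$(printf '%s\n' "$first" | last_updated)
second_ts=$(printf '%s\n' "$second" | last_updated)

if [ "$first_ts" != "$second_ts" ]; then
  echo "content refreshed: $first_ts -> $second_ts"
else
  echo "content did not change"
fi
```

Inside the busybox Pod, the samples would be replaced by live requests, for example first_ts=$(wget -qO- http://nginx-content-svc | last_updated), followed by sleep 35 and a second request.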

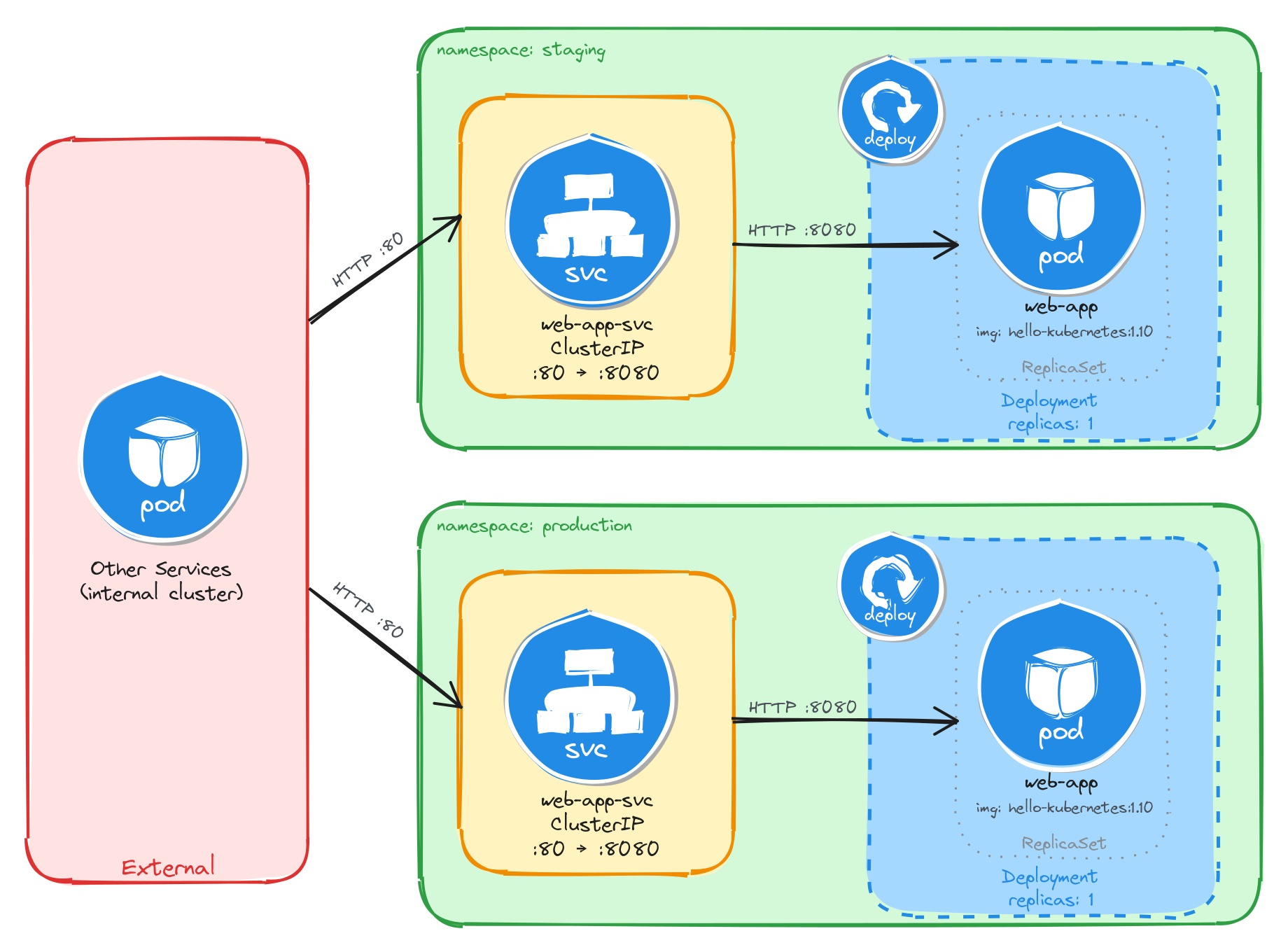

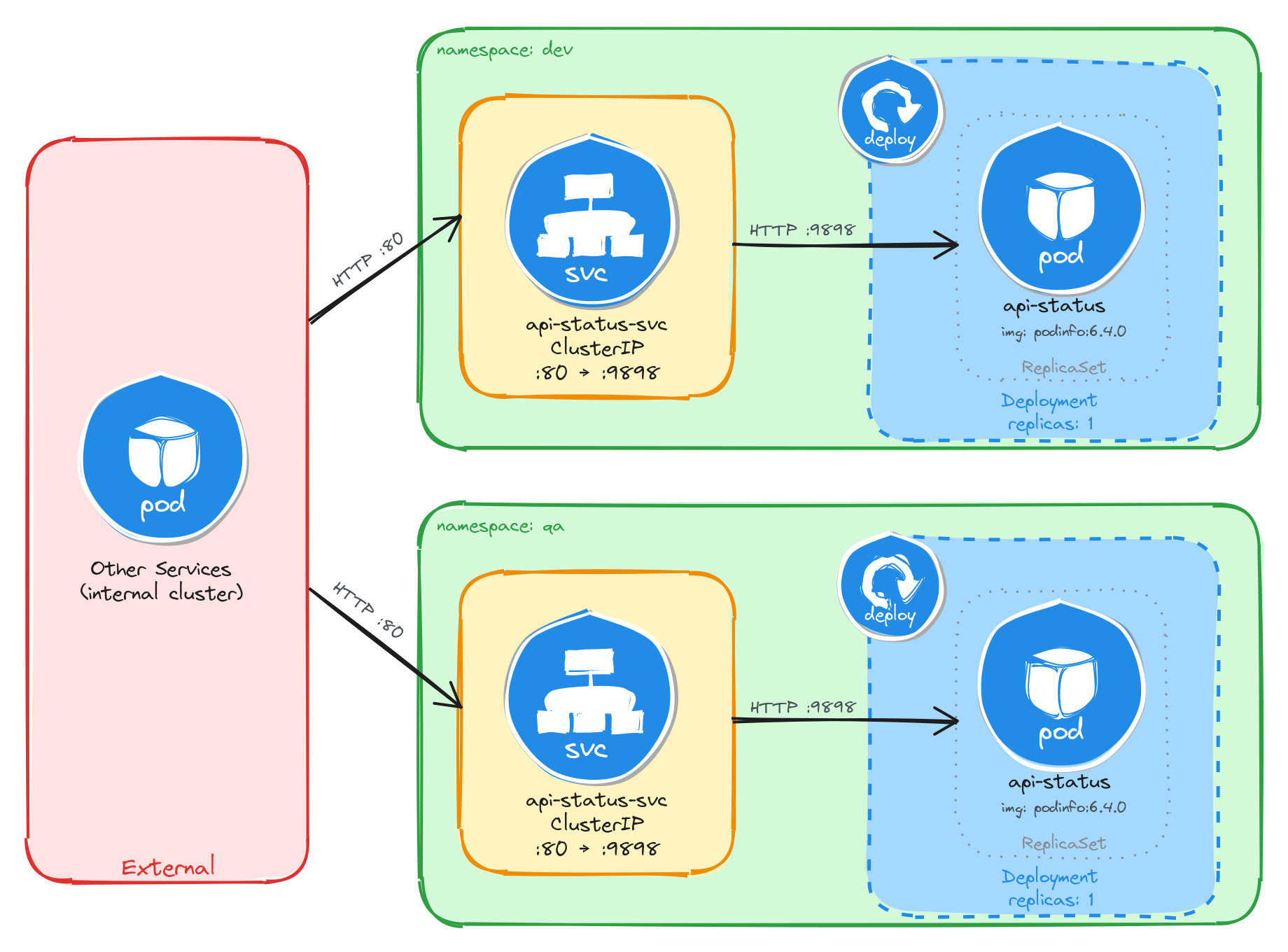

5 Namespace-isolated deployment

Design and deploy the same application with its internal Service into separate Namespaces to simulate staging and production environments.

This category includes the following learning objectives:

- Understanding of Pods.

- Understanding of Deployments.

- Understanding of ClusterIP Services.

- Understanding of Namespace isolation, resource scoping, and deploying objects into specific Namespaces.

5.1 Task 1: Design and deploy a web application in staging and production namespaces